Content

2. Maximum Likelihood/a Posteriori Estimation

3. Markov Chain Monte Carlo for Bayesian Inference

There are two very different approaches to statistical inference from probabilistic systems, frequentist and Bayesian, with very different philosophies [QuantStart Team, 1].

Frequentist statistics tries to eliminate uncertainty by providing estimates, and treats probabilities as the long-run frequencies of random events in repeated trials. Bayesian statistics tries to preserve and refine uncertainty by adjusting individual beliefs in light of new evidence.

Thus in the Bayesian interpretation a probability is a summary of an individual's opinion. A key point is that different (intelligent) individuals can have different opinions (and thus different prior beliefs), since they have differing access to data and ways of interpreting it. This philosophy is similar to a random forest, which does not give a single definitive value but a collection of values (from Nando de Freitas's MCMC lecture [Nando de Freitas]). However, as these individuals come across new data that they all have access to, their (potentially differing) prior beliefs will lead to posterior beliefs that begin converging towards each other, under the rational updating procedure of Bayesian inference.

In order to carry out Bayesian inference, we need to utilise a famous theorem in probability known as Bayes' rule and interpret it in the correct fashion.

A natural example question to ask is "What is the probability of seeing 3 heads in 8 flips (8 Bernoulli trials), given a fair coin?".
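As a quick check of the question above, the probability can be computed directly from the binomial distribution. This is a minimal sketch using `scipy.stats.binom`; the variable names are illustrative only. By hand, the value is $\binom{8}{3} / 2^8 = 56/256$.

```python
from scipy import stats

# Probability of exactly 3 heads in 8 flips of a fair coin (theta = 0.5).
n_flips, n_heads, theta = 8, 3, 0.5
p_three_heads = stats.binom.pmf(n_heads, n_flips, theta)
print(f"P(3 heads in 8 flips | fair coin) = {p_three_heads:.5f}")
```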

The number of heads in a series of coin flips follows a binomial distribution, which describes a collection of Bernoulli trials. A Bernoulli trial is a random experiment with only two outcomes, usually labelled "success" or "failure", in which the probability of success is given by $\theta$.

Through coin flip experiments (repeated Bernoulli trials) we will generate some data, $D$.

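The way to calculate the posterior $P(\theta|D)$ is Bayes' rule:

$$P(\theta|D) = \frac{P(D|\theta) \, P(\theta)}{P(D)}$$

where: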

- $P(\theta)$ is the prior: This is the strength of our belief in $\theta$ without considering the evidence $D$. It is our prior view on how fair the coin is.

- $P(\theta|D)$ is the posterior: This is the (refined) strength of our belief in $\theta$ once the evidence $D$ has been taken into account. After seeing 4 heads out of 8 flips, say, this is our updated view on the fairness of the coin.

- $P(D|\theta)$ is the likelihood: This is the probability of seeing the data $D$ as generated by a model with parameter $\theta$. If we knew the coin was fair, this tells us the probability of seeing a number of heads in a particular number of flips.

- $P(D)$ is the evidence: This is the probability of the data as determined by summing (or integrating) across all possible values of $\theta$, weighted by how strongly we believe in those particular values of $\theta$, i.e.

$$P(D) = \int P(D|\theta) \, P(\theta) \, d\theta$$

Note since

If we had multiple views of what the fairness of the coin is (but didn't know for sure), then this tells us the probability of seeing a certain sequence of flips for all possibilities of our belief in the coin's fairness.
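To make the four terms concrete, here is a small sketch that applies Bayes' rule on a discrete set of candidate fairness values. The three candidate values and the flat prior are assumptions chosen only for illustration; the data (4 heads in 8 flips) follows the example mentioned above.

```python
import numpy as np
from scipy import stats

# Hypothetical candidate values of theta with a flat prior over them.
thetas = np.array([0.25, 0.5, 0.75])
prior = np.full(len(thetas), 1.0 / len(thetas))

# Data D: 4 heads out of 8 flips.
heads, flips = 4, 8
likelihood = stats.binom.pmf(heads, flips, thetas)   # P(D | theta)

# Evidence P(D): likelihood summed over theta, weighted by the prior.
evidence = np.sum(likelihood * prior)
posterior = likelihood * prior / evidence            # P(theta | D)

for t, p in zip(thetas, posterior):
    print(f"theta = {t:.2f}: posterior = {p:.3f}")
```

With a continuous $\theta$ the sum becomes an integral, which is where the difficulty discussed later comes from.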

Coin-flipping example I: A fair coin [QuantStart Team, 1]

In this example we are going to consider multiple coin-flips of a coin with unknown fairness. Now, suppose we have data generated from 1000 Bernoulli trials with $\theta = 0.5$:

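```python
from scipy import stats

data = stats.bernoulli.rvs(0.5, size=1000)
```

Our goal is to learn the posterior density of $\theta$ from the data. We use a Beta distribution as the prior. The Beta prior is called a conjugate prior, since the posterior distribution $P(\theta|D)$ then belongs to the same family of distributions as the prior $P(\theta)$.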

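Once we know the number of heads within the number of trials, the posterior is again a Beta distribution; starting from a uniform $\mathrm{Beta}(1, 1)$ prior, it is $\mathrm{Beta}(1 + \text{heads}, 1 + N - \text{heads})$.

Using SciPy, we can model the posterior density as

```python
import numpy as np

x = np.linspace(0, 1, 100)
# heads = number of heads observed in the first N flips of data
y = stats.beta.pdf(x, 1 + heads, 1 + N - heads)
```

Before any trials (0 trials and 0 heads), our posterior density is a uniform distribution. We can interpret this as follows: because the fairness is unknown, the uniform distribution states that each level of fairness (or each value of $\theta$) is equally likely.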

The next panel shows 2 trials and 1 head. With this data, the posterior density is symmetric, but

Here we borrow the code from the blog [QuantStart Team, 1].
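The panel-by-panel update described above can be sketched in a self-contained way as follows. This is not the blog's code: the list of trial counts, the fixed seed, and the uniform $\mathrm{Beta}(1, 1)$ prior are assumptions made for illustration, and the plotting step is left out.

```python
import numpy as np
from scipy import stats

# Simulated flips of a fair coin; the seed is fixed only for reproducibility.
data = stats.bernoulli.rvs(0.5, size=1000, random_state=0)

x = np.linspace(0, 1, 100)
posterior_means = {}
for N in [0, 2, 10, 50, 500, 1000]:      # hypothetical panel sizes
    heads = int(data[:N].sum())
    # Uniform Beta(1, 1) prior updated with the observed counts.
    a, b = 1 + heads, 1 + N - heads
    y = stats.beta.pdf(x, a, b)          # density one would plot for this panel
    posterior_means[N] = a / (a + b)
    print(f"N = {N:4d}, heads = {heads:3d}, posterior mean = {posterior_means[N]:.3f}")
```

As the number of trials grows, the density `y` concentrates around the value of $\theta$ used to generate the data.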

Now what will we see if the data implicitly hints that the coin is unfair? Suppose we have data generated from 1000 Bernoulli trials but with $\theta = 0.8$:

```python
data = stats.bernoulli.rvs(0.8, size=1000)
```

By a similar procedure, we can see that the learned posterior peaks near $\theta = 0.8$.

In the Bayesian framework, Maximum Likelihood Estimation (MLE) and Maximum A Posteriori (MAP) estimation are both methods for estimating some variable in the setting of probability distributions or graphical models [Agustinus Kristiadi].

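In short, mathematically an MLE model is determined by

$$\theta_{MLE} = \arg\max_\theta P(D|\theta)$$

whereas for MAP, a model is determined by

$$\theta_{MAP} = \arg\max_\theta P(\theta|D) = \arg\max_\theta P(D|\theta) \, P(\theta)$$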

We actually use MLE without knowing it in everyday practice. For example, when fitting a Gaussian to our dataset, we immediately take the sample mean and sample variance and use them as the parameters of our Gaussian. This is MLE: if we take the derivative of the Gaussian log-likelihood with respect to the mean and variance and set it to zero, we obtain exactly the sample mean and sample variance [Agustinus Kristiadi]. This step maximizes the likelihood. Another example is the Naive Bayes spam filter. We can compute the likelihood of a specific word appearing in spam. Then, given an email, the likelihood of the email being spam is the naive product of the likelihoods of the individual words that appear in the email.
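The Gaussian example above can be checked numerically. The sketch below compares the closed-form estimates with SciPy's maximum-likelihood fit, `scipy.stats.norm.fit`; the synthetic data and its parameters are assumptions for illustration. Note that the MLE of the variance is the $1/N$ version (`ddof=0`), not the unbiased $1/(N-1)$ one.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
samples = rng.normal(loc=2.0, scale=1.5, size=5000)   # synthetic data

# Closed-form MLE: sample mean and the 1/N (ddof=0) standard deviation.
mu_hat = samples.mean()
sigma_hat = samples.std(ddof=0)

# SciPy's maximum-likelihood fit of a normal distribution, for comparison.
mu_fit, sigma_fit = stats.norm.fit(samples)

print(mu_hat, mu_fit)
print(sigma_hat, sigma_fit)
```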

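Most of the optimization in Machine Learning and Deep Learning (neural nets, etc.) can be interpreted as MLE. Given model parameters $\theta$ and data $D$, MLE solves

$$\hat{\theta} = \arg\max_\theta P(D|\theta)$$

which gives us a point estimate of $\theta$, whereas full Bayesian inference computes

$$P(\theta|D) = \frac{P(D|\theta) \, P(\theta)}{P(D)}$$

which gives us a distribution of $\theta$.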

To provide more concrete examples, in linear regression, we make an assumption that the likelihood is a normal distribution (both

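then, writing the model as $y_i = \theta^T x_i + \epsilon_i$ with Gaussian noise $\epsilon_i \sim \mathcal{N}(0, \sigma^2)$, the likelihood is

$$P(D|\theta) = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(y_i - \theta^T x_i)^2}{2\sigma^2}\right)$$

Note that maximizing the likelihood function is equivalent to maximizing its log. Therefore, we usually denote MLE as

$$\theta_{MLE} = \arg\max_\theta \log P(D|\theta) = \arg\max_\theta \sum_{i=1}^{n} \log P(y_i|x_i, \theta)$$

Thus the problem is equivalent to minimizing the cost function

$$J(\theta) = \frac{1}{2} \sum_{i=1}^{n} (y_i - \theta^T x_i)^2$$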
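The equivalence between Gaussian MLE and least squares can be illustrated numerically. In this sketch the synthetic data, the noise level, and the function name `neg_log_likelihood` are assumptions for illustration; it minimizes the negative Gaussian log-likelihood with a generic optimizer and compares the result with the ordinary least-squares solution.

```python
import numpy as np
from scipy import optimize

rng = np.random.default_rng(0)
X = np.column_stack([np.ones(200), rng.normal(size=200)])   # bias + one feature
theta_true = np.array([1.0, 2.0])
y = X @ theta_true + rng.normal(scale=0.5, size=200)

def neg_log_likelihood(theta, sigma=0.5):
    """Negative log-likelihood of a linear model with Gaussian noise."""
    residuals = y - X @ theta
    n = len(y)
    return 0.5 * n * np.log(2 * np.pi * sigma**2) + np.sum(residuals**2) / (2 * sigma**2)

# Maximizing the likelihood = minimizing the negative log-likelihood.
theta_mle = optimize.minimize(neg_log_likelihood, x0=np.zeros(2)).x

# Ordinary least squares on the same data.
theta_ls, *_ = np.linalg.lstsq(X, y, rcond=None)

print(theta_mle, theta_ls)
```

The noise variance only rescales and shifts the objective, so it does not change where the minimum over $\theta$ lies.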

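On the other hand, in logistic regression (binary classification), the likelihood is a Bernoulli distribution. Designate the hypothesis function

$$h_\theta(x) = \frac{1}{1 + e^{-\theta^T x}}$$

as the probability of $y = 1$ given $x$, so that

$$P(y|x, \theta) = h_\theta(x)^{y} \, (1 - h_\theta(x))^{1 - y}$$

Then similarly, we convert maximizing the log of the likelihood to minimizing the cost function

$$J(\theta) = -\sum_{i=1}^{n} \left[ y_i \log h_\theta(x_i) + (1 - y_i) \log(1 - h_\theta(x_i)) \right]$$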
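As a rough illustration of the logistic-regression case, the sketch below minimizes the cross-entropy cost with plain gradient descent. The synthetic data, learning rate, and iteration count are assumptions, and the cost is averaged over samples (rather than summed) purely to keep the step size easy to choose.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cross_entropy(theta, X, y):
    """Mean negative Bernoulli log-likelihood."""
    h = sigmoid(X @ theta)
    eps = 1e-12   # guard against log(0)
    return -np.mean(y * np.log(h + eps) + (1 - y) * np.log(1 - h + eps))

rng = np.random.default_rng(0)
X = np.column_stack([np.ones(500), rng.normal(size=500)])   # bias + one feature
theta_true = np.array([-0.5, 2.0])
y = (rng.uniform(size=500) < sigmoid(X @ theta_true)).astype(float)

theta = np.zeros(2)
initial_loss = cross_entropy(theta, X, y)
for _ in range(2000):
    grad = X.T @ (sigmoid(X @ theta) - y) / len(y)   # gradient of the mean cross-entropy
    theta -= 0.5 * grad
final_loss = cross_entropy(theta, X, y)

print(initial_loss, final_loss, theta)
```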

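MAP, in contrast, usually comes up in a Bayesian setting. It works on the posterior distribution rather than on the likelihood alone:

$$\theta_{MAP} = \arg\max_\theta P(\theta|D) = \arg\max_\theta P(D|\theta) \, P(\theta)$$

Using the log trick, we can rewrite the above expression as

$$\theta_{MAP} = \arg\max_\theta \left[ \log P(D|\theta) + \log P(\theta) \right]$$

What it means is that the likelihood is now weighted with some weight coming from the prior [Agustinus Kristiadi]. When the prior is uniform, i.e. we assign equal weights everywhere, on all possible values of $\theta$, the prior term is a constant and MAP reduces to MLE.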

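Instead, if we use a zero-mean Gaussian distribution for the prior (with variance denoted here by $\tau^2$),

$$P(\theta) \propto \exp\left(-\frac{\|\theta\|_2^2}{2\tau^2}\right), \qquad \log P(\theta) = -\frac{1}{2\tau^2} \|\theta\|_2^2 + \text{const}$$

which can be identified with an L2 (Ridge) regularization term $-\lambda \|\theta\|_2^2$ if $\lambda = 1/(2\tau^2)$,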

commonly seen in regression [Nando de Freitas] and

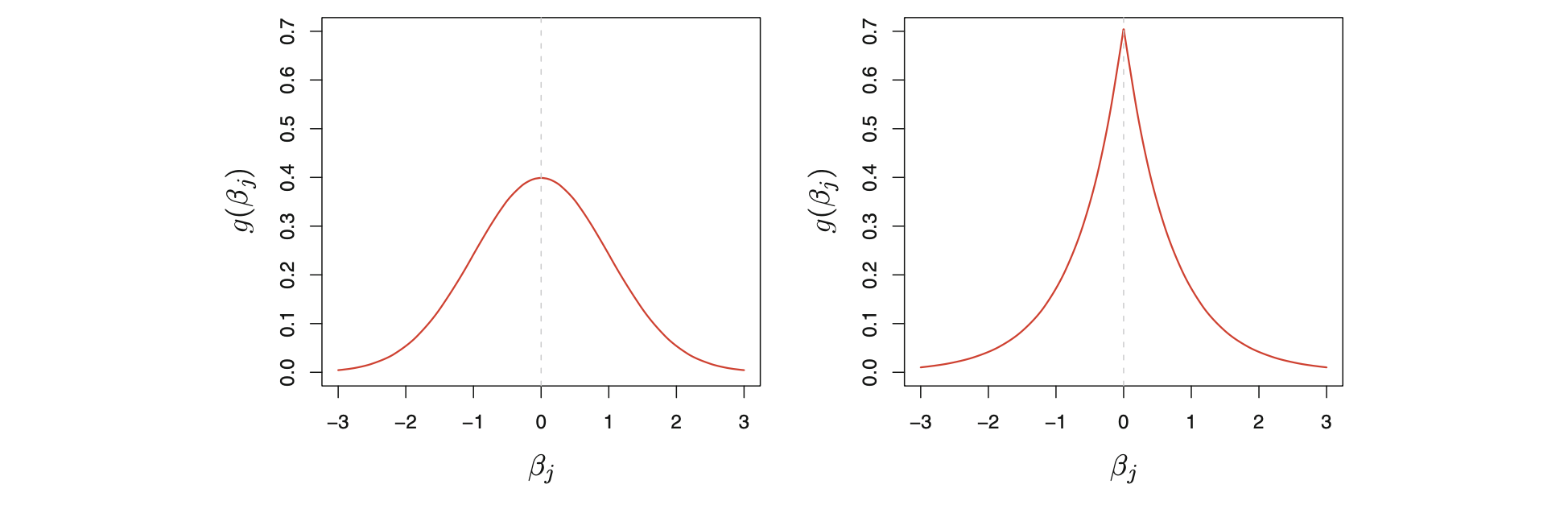

Similarly, L1 (Lasso) is the posterior mode for $\theta$ under a double-exponential (Laplace) prior [Stathis Kamperis].

In the figure (credit: the book An Introduction to Statistical Learning), left: Gaussian prior (for ridge); right: double-exponential prior (for lasso).
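The ridge connection can be checked on a small synthetic problem. This sketch assumes a linear model with Gaussian noise of variance `sigma2` and a zero-mean Gaussian prior of variance `tau2` (both values, and the data, are made up for illustration). Multiplying the negative log posterior by `2 * sigma2` gives the usual ridge objective $\|y - X\theta\|^2 + \lambda\|\theta\|^2$ with $\lambda = \sigma^2/\tau^2$, whose minimizer has a closed form.

```python
import numpy as np
from scipy import optimize

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=1.0, size=100)

sigma2, tau2 = 1.0, 0.5     # assumed noise variance and prior variance
lam = sigma2 / tau2         # equivalent ridge penalty strength

def neg_log_posterior(theta):
    # -log P(D|theta) - log P(theta), dropping terms that do not depend on theta.
    return np.sum((y - X @ theta) ** 2) / (2 * sigma2) + np.sum(theta**2) / (2 * tau2)

# MAP estimate by numerical optimization of the posterior.
theta_map = optimize.minimize(neg_log_posterior, x0=np.zeros(3)).x

# Closed-form ridge solution: (X^T X + lam * I)^(-1) X^T y.
theta_ridge = np.linalg.solve(X.T @ X + lam * np.eye(3), X.T @ y)

print(theta_map, theta_ridge)
```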

A weakness of MLE is that it can overfit the data, meaning that the variance of the parameter estimates is high, or put another way, that the outcome of the parameter estimate is sensitive to random variations in the data (by James McInerney, [Quora]). MAP estimation can be regarded as adding regularisation to MLE.

The prior beliefs about the parameters determine what this random process looks like. If the prior beliefs are strong, then the observed data have relatively little impact on the parameter estimates (i.e., low variance but high bias), while if the prior beliefs are weak, then the outcome is more like standard MLE (i.e., low bias but high variance).

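First let's come back to the coin-flip problem; the outcome is either heads or tails. The likelihood of each sample is a Bernoulli distribution (and that of the entire dataset is a binomial distribution) [Barnabás Póczos & Aarti Singh]:

$$P(x_i|\theta) = \theta^{x_i} (1 - \theta)^{1 - x_i}, \qquad x_i \in \{0, 1\}$$

Question: suppose we observed data X = {1,1,1,1,1,1} and the sample comes from an iid Bernoulli distribution; what is a good guess of $\theta$?

Here we denote by $n_H$ the number of heads and by $n_T$ the number of tails, so that the likelihood of the data is

$$P(D|\theta) = \theta^{n_H} (1 - \theta)^{n_T}$$

To maximize it, we differentiate the log-likelihood with respect to $\theta$ and set the derivative to zero:

$$\frac{\partial}{\partial \theta} \left[ n_H \log\theta + n_T \log(1 - \theta) \right] = \frac{n_H}{\theta} - \frac{n_T}{1 - \theta} = 0$$

Then we obtain the MLE estimate [Cross Validated: How to derive the likelihood function for binomial distribution for parameter estimation?]

$$\hat{\theta}_{MLE} = \frac{n_H}{n_H + n_T}$$

which is consistent with our intuition. For the data above, $n_H = 6$ and $n_T = 0$, so $\hat{\theta}_{MLE} = 1$.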

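For MAP, we need to look at the Bayesian posterior

$$P(\theta|D) \propto P(D|\theta) \, P(\theta)$$

If we use a Beta distribution (which is a conjugate prior for the binomial distribution) as the prior,

$$P(\theta) = \frac{\Gamma(\alpha + \beta)}{\Gamma(\alpha)\Gamma(\beta)} \, \theta^{\alpha - 1} (1 - \theta)^{\beta - 1}$$

By probability normalization, we have the following relation

$$\int_0^1 \theta^{\alpha - 1} (1 - \theta)^{\beta - 1} \, d\theta = \frac{\Gamma(\alpha)\Gamma(\beta)}{\Gamma(\alpha + \beta)}$$

Then, with $n_H$ heads and $n_T$ tails in the data, we can see that the posterior and the prior have the same form

$$P(\theta|D) \propto \theta^{n_H} (1 - \theta)^{n_T} \cdot \theta^{\alpha - 1} (1 - \theta)^{\beta - 1}$$

which can be simply rewritten as

$$P(\theta|D) = \frac{\Gamma(\alpha + \beta + n_H + n_T)}{\Gamma(\alpha + n_H)\Gamma(\beta + n_T)} \, \theta^{\alpha + n_H - 1} (1 - \theta)^{\beta + n_T - 1}$$

Given the data, the expectation value of $\theta$ is

$$\bar{\theta} = \int_0^1 \theta \, P(\theta|D) \, d\theta = \frac{\Gamma(\alpha + \beta + n_H + n_T)}{\Gamma(\alpha + n_H)\Gamma(\beta + n_T)} \cdot \frac{\Gamma(\alpha + n_H + 1)\Gamma(\beta + n_T)}{\Gamma(\alpha + \beta + n_H + n_T + 1)}$$

So

$$\bar{\theta} = \frac{\alpha + n_H}{\alpha + \beta + n_H + n_T}$$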

From the above, using:

- $B(2,2)$, $\bar{\theta} = 8/10$.

- $B(1, 1)$, $\bar{\theta} = 7/8$.

- $B(1, 0.01)$, $\bar{\theta} = 7/7.01$.
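The three values above follow from the posterior mean of a Beta prior updated with 6 heads and 0 tails, i.e. $(\alpha + n_H)/(\alpha + \beta + n_H + n_T)$. A short sketch to reproduce them (the function name `posterior_mean` is made up for this illustration):

```python
n_heads, n_tails = 6, 0   # observed data X = {1,1,1,1,1,1}

def posterior_mean(alpha, beta, n_heads, n_tails):
    """Mean of the Beta(alpha + n_heads, beta + n_tails) posterior."""
    return (alpha + n_heads) / (alpha + beta + n_heads + n_tails)

theta_mle = n_heads / (n_heads + n_tails)
print(f"MLE: {theta_mle:.4f}")
for alpha, beta in [(2, 2), (1, 1), (1, 0.01)]:
    mean = posterior_mean(alpha, beta, n_heads, n_tails)
    print(f"Beta({alpha}, {beta}) prior: posterior mean = {mean:.4f}")
```

The weaker the prior belief in tails, the closer the posterior mean moves to the MLE value of 1.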

The blog [Suzanna Sia] has more description on Bayesian inference for binomial distributions with Python code.

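The second example extends the binomial outcome to the multinomial. Say we now have a die with $K = 6$ faces, where face $k$ has probability $\theta_k$ and is observed $n_k$ times; then the likelihood function becomes

$$P(D|\theta) \propto \prod_{k=1}^{K} \theta_k^{n_k}, \qquad \sum_{k=1}^{K} \theta_k = 1$$

If we use a Dirichlet distribution with parameters $\alpha_k$ as the prior,

$$P(\theta) \propto \prod_{k=1}^{K} \theta_k^{\alpha_k - 1}$$

then we have the posterior as a Dirichlet distribution

$$P(\theta|D) \propto \prod_{k=1}^{K} \theta_k^{n_k + \alpha_k - 1}$$

and we can still maximize this term to estimate $\theta$.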
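A small numerical sketch of the die example, assuming made-up roll counts and a symmetric Dirichlet prior with all parameters equal to 2. The posterior parameters are the prior parameters plus the counts; the mode formula used for the MAP estimate is the standard one for a Dirichlet distribution and is valid when every posterior parameter exceeds 1.

```python
import numpy as np

counts = np.array([3, 5, 2, 4, 6, 10])   # hypothetical counts for faces 1..6
alpha = np.full(6, 2.0)                  # assumed symmetric Dirichlet prior

posterior_alpha = alpha + counts         # posterior is Dirichlet(alpha + counts)

theta_mle = counts / counts.sum()
# Posterior mode (MAP estimate), valid when all posterior_alpha > 1.
theta_map = (posterior_alpha - 1) / (posterior_alpha.sum() - len(alpha))
# Posterior mean, for comparison.
theta_mean = posterior_alpha / posterior_alpha.sum()

print(theta_mle)
print(theta_map)
print(theta_mean)
```

Compared with the MLE, both Bayesian estimates are pulled towards the uniform value of 1/6 per face.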

In the above coin-flip example, we inferred a binomial proportion using the concept of conjugate priors. However, not all models can make use of conjugate priors. In such cases, the posterior distribution needs to be approximated numerically. Recall Bayes' rule

$$P(\theta|D) = \frac{P(D|\theta) \, P(\theta)}{P(D)} = \frac{P(D|\theta) \, P(\theta)}{\int P(D|\theta) \, P(\theta) \, d\theta}$$

To infer the fairness of a coin flip, we have one variable and use a conjugate prior, so that both the prior and the posterior belong to the same family of probability distributions.

But in reality, both

To be more concrete, let's look at Bayesian logistic regression [Nando de Freitas].

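Given data with labels $y_i \in \{0, 1\}$ and inputs $x_i$, the likelihood is

$$P(D|\theta) = \prod_{i} h_\theta(x_i)^{y_i} \, (1 - h_\theta(x_i))^{1 - y_i}$$

here $h_\theta(x) = 1/(1 + e^{-\theta^T x})$ is the logistic function.

Our goal is to find optimal values of $\theta$.

The optimal values of $\theta$ under MLE are

$$\theta_{MLE} = \arg\max_\theta \sum_{i} \left[ y_i \log h_\theta(x_i) + (1 - y_i) \log(1 - h_\theta(x_i)) \right]$$

which is equivalent to minimizing the cross-entropy loss function using gradient descent in logistic regression.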

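On the other hand, in the full Bayesian treatment we can imagine it becomes very difficult to solve the integral in the evidence $P(D)$: if we have 100 features, there are 100 variables in the integral! In the Bayesian framework, we are also interested in evaluating expectation values of the form

$$E[f(\theta)] = \int f(\theta) \, P(\theta|D) \, d\theta$$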

As in the above example, high-dimensional integrals are difficult to perform. The motivation behind Markov Chain Monte Carlo methods is that they perform an intelligent search of the parameters, θ, within a high-dimensional space, and thus Bayesian models in high dimensions become tractable. The idea is to sample from the posterior distribution by combining a "random search" (the Monte Carlo aspect) with a mechanism for intelligently "jumping" around, but in a manner that ultimately doesn't depend on where we started from (the Markov Chain aspect). Hence Markov Chain Monte Carlo methods are memoryless searches performed with intelligent jumps [QuantStart Team, 2]. Ben Shaver provides a good introduction [Ben Shaver].
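The Monte Carlo aspect can be illustrated on its own: once we can draw samples from a posterior, expectation values become simple averages over those samples. The sketch below reuses the coin example with a $B(2,2)$ prior and 6 heads out of 6 flips, whose posterior is a Beta(8, 2) distribution that NumPy can sample directly; the sample size and seed are arbitrary choices. MCMC is needed precisely when such direct sampling is not available.

```python
import numpy as np

rng = np.random.default_rng(0)

# Posterior from the coin example: Beta(2, 2) prior, 6 heads, 0 tails -> Beta(8, 2).
samples = rng.beta(8, 2, size=100_000)

mc_mean = samples.mean()             # Monte Carlo estimate of E[theta | D]
mc_prob = np.mean(samples > 0.5)     # Monte Carlo estimate of P(theta > 0.5 | D)
exact_mean = 8 / (8 + 2)             # closed-form posterior mean for comparison

print(mc_mean, exact_mean, mc_prob)
```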

Markov Chain Monte Carlo is a family of algorithms, rather than one particular method. It includes Metropolis, Metropolis-Hastings, the Gibbs Sampler, Hamiltonian MCMC and the No-U-Turn Sampler (NUTS). The main difference among the MCMC algorithms is how you update the parameters $\theta$.

The basic recipes for most MCMC algorithms tend to follow this pattern:

1. Begin the algorithm at the current position θ_current in parameter space

2. Propose a "jump" to a new position θ_new in parameter space

3. Accept or reject the jump probabilistically using the prior information and available data

   - If the jump is accepted, move to the new position θ_current = θ_new and return to step 1

   - If the jump is rejected, stay unchanged in θ_current and return to step 1

4. After a set number of jumps have occurred, return all of the accepted positions

The Metropolis algorithm uses a normal distribution to propose a jump. This normal distribution has a mean value μ equal to the current position, and its standard deviation σ is the updating step (the blog [QuantStart Team, 2] calls it the "proposal width").

The updating step is a parameter of the Metropolis algorithm and has a significant impact on convergence. A larger step moves the parameter further on each jump but can easily miss regions of higher probability, whereas a smaller step could take longer to converge.
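The recipe above can be sketched for the coin example as follows. This is a minimal illustration rather than the blog's implementation: it assumes the $B(2,2)$ prior with 6 heads and 0 tails, and the function names, proposal width, chain length, and burn-in length are arbitrary choices. As is standard for Metropolis, the chain records the current position at every iteration, so a rejected jump repeats the previous value.

```python
import numpy as np

def log_posterior(theta, n_heads=6, n_tails=0, alpha=2.0, beta=2.0):
    """Unnormalised log posterior: Bernoulli likelihood times Beta prior."""
    if theta <= 0.0 or theta >= 1.0:
        return -np.inf   # zero posterior density outside (0, 1)
    return (n_heads + alpha - 1) * np.log(theta) + (n_tails + beta - 1) * np.log(1 - theta)

def metropolis(n_samples=20_000, proposal_width=0.2, theta_init=0.5, seed=0):
    rng = np.random.default_rng(seed)
    theta_current = theta_init
    chain = np.empty(n_samples)
    for i in range(n_samples):
        # Propose a jump from a normal centred on the current position.
        theta_new = rng.normal(theta_current, proposal_width)
        # Accept with probability min(1, posterior ratio), computed in log space.
        log_ratio = log_posterior(theta_new) - log_posterior(theta_current)
        if np.log(rng.uniform()) < log_ratio:
            theta_current = theta_new
        chain[i] = theta_current   # a rejected jump repeats the current position
    return chain

chain = metropolis()
burned = chain[2_000:]   # discard an initial burn-in segment
print(burned.mean())     # should be close to the exact posterior mean 8/10
```

Only the ratio of posterior densities is needed, so the evidence $P(D)$ never has to be computed. Changing `proposal_width` is a simple way to see the convergence trade-off described above.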

[Barnabás Póczos & Aarti Singh] MLE, MAP, Bayes classifications

[Ben Shaver] A Zero-Math Introduction to Markov Chain Monte Carlo Methods

[Brian Keng] A Probabilistic Interpretation of Regularization

[Mary Mcglohon] MLE, MAP, AND NAIVE BAYES

[Nando de Freitas] Machine learning - Importance sampling and MCMC I

[QuantStart Team, 1] Bayesian Statistics: A Beginner's Guide

[QuantStart Team, 2] Markov Chain Monte Carlo for Bayesian Inference - The Metropolis Algorithm

[Stathis Kamperis] Bayesian connection to LASSO and ridge regression

[Suzanna Sia] Closed form Bayesian Inference for Binomial distributions