| title | description | Keywords | services | documentationcenter | author | manager | editor | tags | ms.assetid | ms.service | ms.devlang | ms.topic | ms.tgt_pltfrm | ms.workload | ms.date | ms.author |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Azure HDInsight Tools - Use Visual Studio Code for Hive, LLAP or pySpark \| Microsoft Docs | Learn how to use the Azure HDInsight Tools for Visual Studio Code to create and submit queries and scripts. | VS Code,Azure HDInsight Tools,Hive,Python,PySpark,Spark,HDInsight,Hadoop,LLAP,Interactive Hive,Interactive Query | HDInsight | | jejiang | jgao | | azure-portal | | HDInsight | na | article | na | big-data | 10/27/2017 | jejiang |

Learn how to use the Azure HDInsight Tools for Visual Studio Code (VS Code) to create and submit Hive batch jobs, interactive Hive queries, and PySpark scripts. The Azure HDInsight Tools can be installed on all platforms that VS Code supports: Windows, Linux, and macOS. The prerequisites for each platform are listed below.

The following items are required for completing the steps in this article:

- An HDInsight cluster. To create a cluster, see Get started with HDInsight.

- Visual Studio Code.

- Mono. Mono is only required for Linux and macOS.

After you have installed the prerequisites, you can install the Azure HDInsight Tools for VS Code.

To install Azure HDInsight Tools

- Open Visual Studio Code.

- In the left pane, select Extensions. In the search box, enter HDInsight.

- Next to Azure HDInsight Tools, select Install. After a few seconds, the Install button changes to Reload.

- Select Reload to activate the Azure HDInsight Tools extension.

- Select Reload Window to confirm. Azure HDInsight Tools now appears in the Extensions pane.

You must create a workspace in VS Code before you can connect to Azure.

To open a workspace

- On the File menu, select Open Folder. Then designate an existing folder as your work folder, or create a new one. The folder appears in the left pane.

- In the left pane, select the New File icon next to the work folder.

- Name the new file with either the .hql (Hive queries) or the .py (Spark script) file extension. An XXXX_hdi_settings.json configuration file is automatically added to the work folder.

- Open XXXX_hdi_settings.json from EXPLORER, or right-click the script editor and select Set Configuration. You can configure the login entry, default cluster, and job submission parameters, as shown in the sample in the file. You can leave the remaining parameters empty.
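Because the settings file is JSON, you can also check outside VS Code which parameters you have left empty. The following is a minimal Python sketch, not part of the tools, assuming the file is a flat JSON object without comments in which an unset parameter holds `""`, `null`, `[]`, or `{}`. The real parameter names are the ones in the sample in your own XXXX_hdi_settings.json; the demo file name and keys below are hypothetical.

```python
import json
from pathlib import Path


def unset_parameters(settings_path):
    """Return the top-level keys of a settings file whose values are still empty.

    Assumes a flat JSON object (no comments) in which an unset parameter
    holds "", null, [] or {}.
    """
    settings = json.loads(Path(settings_path).read_text(encoding="utf-8-sig"))
    return sorted(key for key, value in settings.items()
                  if value in ("", None, [], {}))


if __name__ == "__main__":
    # Hypothetical file and key names, for illustration only.
    demo = Path("demo_hdi_settings.json")
    demo.write_text(json.dumps({
        "loginEntry": "Azure",
        "defaultCluster": "",
        "jobParameters": {},
    }), encoding="utf-8")
    print(unset_parameters(demo))  # ['defaultCluster', 'jobParameters']
```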

Before you can submit scripts to HDInsight clusters from VS Code, you need to connect to your Azure account.

To connect to Azure

- Create a new work folder and a new script file if you don't already have them.

- Right-click the script editor, and then select HDInsight: Login from the context menu. You can also press Ctrl+Shift+P and then enter HDInsight: Login.

- To sign in, follow the sign-in instructions in the OUTPUT pane.

  After you're connected, your Azure account name is shown on the status bar at the bottom left of the VS Code window.

  [!NOTE] Because of a known Azure authentication issue, you need to open a browser in private or incognito mode. If two-factor authentication is enabled for your Azure account, we recommend phone authentication instead of PIN authentication.

- Right-click the script editor to open the context menu. From the context menu, you can perform the following tasks:

  - Log out

  - List clusters

  - Set default clusters

  - Submit interactive Hive queries

  - Submit Hive batch scripts

  - Submit interactive PySpark queries

  - Submit PySpark batch scripts

  - Set configurations

To link a cluster

You can link a normal cluster by using an Ambari-managed username. You can also link a secure Hadoop cluster by using a domain username (such as user1@contoso.com).

- Open the command palette by pressing Ctrl+Shift+P, and then enter HDInsight: Link a cluster.

- Enter the HDInsight cluster URL, enter the username and password, and then select the cluster type. A success message appears if verification passes.

  [!NOTE] If you are signed in to an Azure subscription and have also linked the cluster, the tools use the linked username and password.

- You can see a linked cluster by using the HDInsight: List Cluster command. You can now submit a script to the linked cluster.

- You can also unlink a cluster by entering HDInsight: Unlink a cluster in the command palette.
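The link dialog asks for the cluster URL rather than the bare cluster name. HDInsight clusters in global Azure are reached at `https://CLUSTERNAME.azurehdinsight.net`. The following is a small hypothetical Python helper, not part of the tools, that turns either a cluster name or a URL into that form before you paste it; it assumes a global Azure cluster and does not handle Azure China or custom domains.

```python
from urllib.parse import urlparse

# Host-name suffix of HDInsight clusters in global Azure (Azure China differs).
HDINSIGHT_SUFFIX = ".azurehdinsight.net"


def cluster_url(name_or_url):
    """Normalize "mycluster" or a full cluster URL to https://mycluster.azurehdinsight.net."""
    text = name_or_url.strip()
    if "://" not in text:
        text = "https://" + text
    host = urlparse(text).hostname  # lower-cased, without port or path
    if not host:
        raise ValueError(f"not a cluster name or URL: {name_or_url!r}")
    if not host.endswith(HDINSIGHT_SUFFIX):
        host += HDINSIGHT_SUFFIX
    return "https://" + host


print(cluster_url("mycluster"))  # https://mycluster.azurehdinsight.net
```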

To test the connection, you can list your HDInsight clusters:

To list HDInsight clusters under your Azure subscription

- Open a workspace, and then connect to Azure. For more information, see Open HDInsight workspace and Connect to Azure.

- Right-click the script editor, and then select HDInsight: List Cluster from the context menu.

- The Hive and Spark clusters appear in the Output pane.

To set a default cluster

- Open a workspace and connect to Azure. See Open HDInsight workspace and Connect to Azure.

- Right-click the script editor, and then select HDInsight: Set Default Cluster.

- Select a cluster as the default cluster for the current script file. The tools automatically update the configuration file XXXX_hdi_settings.json.

To set the Azure environment

- Open the command palette by pressing Ctrl+Shift+P.

- Enter HDInsight: Set Azure Environment.

- Select either Azure or AzureChina as your default login entry.

- The tools save your default login entry in XXXX_hdi_settings.json. You can also update it directly in this configuration file.
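If you prefer to script that direct update, the following is a minimal Python sketch that rewrites one top-level value and keeps the others. It assumes the file is plain JSON without comments, and the key name `azureEnvironment` is hypothetical: check the sample in your own XXXX_hdi_settings.json for the real parameter name.

```python
import json
from pathlib import Path

# The two login entries offered by HDInsight: Set Azure Environment.
LOGIN_ENTRIES = ("Azure", "AzureChina")


def set_login_entry(settings_path, entry, key="azureEnvironment"):
    """Write `entry` under `key` in a JSON settings file, keeping other values.

    `key` is a hypothetical parameter name: use the name that appears in the
    sample in your own XXXX_hdi_settings.json. Assumes plain JSON (no comments).
    """
    if entry not in LOGIN_ENTRIES:
        raise ValueError(f"entry must be one of {LOGIN_ENTRIES}, got {entry!r}")
    path = Path(settings_path)
    settings = json.loads(path.read_text(encoding="utf-8-sig")) if path.exists() else {}
    settings[key] = entry
    path.write_text(json.dumps(settings, indent=4), encoding="utf-8")
    return settings
```

For example, `set_login_entry("XXXX_hdi_settings.json", "AzureChina")`. Rewriting the file this way resets its indentation, so keep a copy if the original layout matters to you.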

To submit interactive Hive queries

With HDInsight Tools for VS Code, you can submit interactive Hive queries to HDInsight Interactive Query clusters.

- Create a new work folder and a new Hive script file if you don't already have them.

- Connect to your Azure account, and then configure the default cluster if you haven't already done so.

- Copy and paste the following code into your Hive file, and then save it.

  ```sql
  SELECT * FROM hivesampletable;
  ```

- Right-click the script editor, and then select HDInsight: Hive Interactive to submit the query. You can also use the context menu to submit a selected block of code instead of the whole script file. Soon after, the query results appear in a new tab.

  - RESULTS panel: You can save the whole result, or only selected lines, as a CSV, JSON, or Excel file to a local path.

  - MESSAGES panel: When you select a line number, it jumps to the first line of the running script.

Running the interactive query takes much less time than running a Hive batch job.
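A result saved from the RESULTS panel as CSV can be post-processed with standard tooling. The following Python sketch assumes the exported file has a header row with the column names (an assumption; check your own export) and reads it into a list of dictionaries.

```python
import csv
from pathlib import Path


def load_results(csv_path, limit=None):
    """Read an exported results CSV into a list of dicts keyed by column name.

    Assumes the first row holds the column headers; "utf-8-sig" also accepts
    files that start with a byte-order mark.
    """
    with Path(csv_path).open(newline="", encoding="utf-8-sig") as f:
        rows = list(csv.DictReader(f))
    return rows if limit is None else rows[:limit]
```

For example, `load_results("hive_results.csv", limit=5)` returns the first five rows of a hypothetical export named hive_results.csv. All values come back as strings, so convert numeric columns yourself.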

To submit Hive batch scripts

- Create a new work folder and a new Hive script file if you don't already have them.

- Connect to your Azure account, and then configure the default cluster if you haven't already done so.

- Copy and paste the following code into your Hive file, and then save it.

  ```sql
  SELECT * FROM hivesampletable;
  ```

- Right-click the script editor, and then select HDInsight: Hive Batch to submit a Hive job.

- Select the cluster to which you want to submit the job.

After you submit a Hive job, the submission success information and the job ID appear in the OUTPUT panel. The Hive job also opens a WEB BROWSER pane that shows the real-time job logs and status.

Submitting interactive Hive queries takes much less time than submitting a batch job.

To submit interactive PySpark queries

HDInsight Tools for VS Code also enables you to submit interactive PySpark queries to Spark clusters.

- Create a new work folder and a new script file with the .py extension if you don't already have them.

- Connect to your Azure account if you haven't already done so.

- Copy and paste the following code into the script file:

  ```python
  from operator import add

  # `spark` is the SparkSession that the interactive PySpark session provides.
  lines = spark.read.text("/HdiSamples/HdiSamples/FoodInspectionData/README").rdd.map(lambda r: r[0])
  # Split each line into words, pair each word with 1, and sum the pairs per word.
  counters = lines.flatMap(lambda x: x.split(' ')) \
      .map(lambda x: (x, 1)) \
      .reduceByKey(add)
  coll = counters.collect()
  # Sort by count, highest first, and print the five most frequent words.
  sortedCollection = sorted(coll, key=lambda r: r[1], reverse=True)
  for item in sortedCollection[:5]:
      print(item)
  ```

- Highlight this code. Then right-click the script editor and select HDInsight: PySpark Interactive.

- If you haven't already installed the Python extension in VS Code, select the Install button to install it.

- Install the Python environment on your system if you haven't already.

  - For Windows, download and install Python. Then make sure that Python and pip are in your system PATH.

  - For macOS and Linux, see Set up PySpark interactive environment for Visual Studio Code.

- Select a cluster to which to submit your PySpark query. Soon after, the query result is shown in a new tab on the right.

- The tools also support SQL clause queries.
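To confirm the Windows prerequisite that Python and pip are in your system PATH, you can run the following short Python check. It uses only the standard library `shutil.which`, which resolves a command the same way the shell would (including PATHEXT on Windows). If `python` itself is not yet on PATH, run the script with the interpreter's full path.

```python
import shutil


def find_on_path(names=("python", "pip")):
    """Map each executable name to its resolved path on PATH, or None if it is missing."""
    return {name: shutil.which(name) for name in names}


if __name__ == "__main__":
    for name, location in find_on_path().items():
        print(f"{name}: {location or 'not found on PATH'}")
```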

The submission status appears on the left of the bottom status bar when you're running queries. Don't submit other queries when the status is PySpark Kernel (busy).


[!NOTE] The clusters can maintain session information. Defined variables, functions, and their values are kept in the session, so they can be referenced across multiple service calls to the same cluster.
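To picture what session persistence means, the following pure-Python sketch is an analogy only, not how the tools or the cluster are implemented: every submission runs against one shared namespace, so names defined by an earlier submission are still available to a later one. The `submit` helper is hypothetical.

```python
# A plain dict stands in for the cluster-side session (analogy only).
session = {}


def submit(code, namespace=session):
    """Hypothetical helper: run one snippet in the shared namespace, like one submission."""
    exec(code, namespace)


# First submission: define a variable and a function.
submit("threshold = 3\n"
       "def is_frequent(count):\n"
       "    return count >= threshold\n")

# Later submission: reuse both names without redefining them.
submit("result = [c for c in (1, 3, 5) if is_frequent(c)]")
print(session["result"])  # [3, 5]
```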

To submit PySpark batch jobs

- Create a new work folder and a new script file with the .py extension if you don't already have them.

- Connect to your Azure account if you haven't already done so.

- Copy and paste the following code into the script file:

  ```python
  from __future__ import print_function

  import sys
  from operator import add

  from pyspark.sql import SparkSession

  if __name__ == "__main__":
      # Unlike the interactive session, a batch script creates its own SparkSession.
      spark = SparkSession\
          .builder\
          .appName("PythonWordCount")\
          .getOrCreate()

      # Read the sample file as an RDD of lines.
      lines = spark.read.text('/HdiSamples/HdiSamples/SensorSampleData/hvac/HVAC.csv').rdd.map(lambda r: r[0])
      # Count each space-separated token.
      counts = lines.flatMap(lambda x: x.split(' '))\
          .map(lambda x: (x, 1))\
          .reduceByKey(add)
      output = counts.collect()
      for (word, count) in output:
          print("%s: %i" % (word, count))

      spark.stop()
  ```

- Right-click the script editor, and then select HDInsight: PySpark Batch.

- Select a cluster to which to submit your PySpark job.

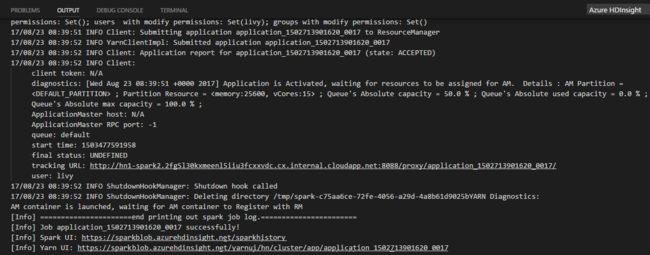

After you submit a Python job, submission logs appear in the OUTPUT window in VS Code. The Spark UI URL and the YARN UI URL are also shown. You can open either URL in a web browser to track the job status.
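Both PySpark samples in this article count words with `flatMap`, `map`, and `reduceByKey(add)`. Before you submit to a cluster, you can check that logic locally on a few lines of text. The following plain-Python sketch swaps in `collections.Counter` for Spark; it mirrors the samples' split on a single space (so repeated spaces produce empty-string tokens), and the sample lines are made up.

```python
from collections import Counter


def word_counts(lines):
    """Count tokens the way the samples do: split every line on a single space."""
    counts = Counter()
    for line in lines:
        counts.update(line.split(' '))  # flatMap + map((x, 1)) + reduceByKey(add)
    return counts


def top_words(lines, n=5):
    """Return the n most frequent (word, count) pairs, highest count first."""
    return sorted(word_counts(lines).items(), key=lambda r: r[1], reverse=True)[:n]


sample = ["the quick brown fox", "the lazy dog", "the fox"]  # made-up text
print(top_words(sample, n=2))  # [('the', 3), ('fox', 2)]
```

The order in which Spark's `collect()` returns pairs isn't guaranteed, so words with equal counts may come back in a different order on the cluster than they do locally.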

HDInsight for VS Code supports the following features:

- IntelliSense auto-complete. Suggestions pop up for keywords, methods, variables, and so on. Different icons represent different types of objects.

- IntelliSense error marker. The language service underlines editing errors in the Hive script.

- Syntax highlighting. The language service uses different colors to differentiate variables, keywords, data types, functions, and so on.

For more information, see the following resources:

- HDInsight for VS Code: Video

- Use Azure Toolkit for IntelliJ to debug Spark applications remotely through VPN

- Use Azure Toolkit for IntelliJ to debug Spark applications remotely through SSH

- Use HDInsight Tools for IntelliJ with Hortonworks Sandbox

- Use HDInsight Tools in Azure Toolkit for Eclipse to create Spark applications

- Use Zeppelin notebooks with a Spark cluster on HDInsight

- Kernels available for Jupyter notebook in Spark cluster for HDInsight

- Use external packages with Jupyter notebooks

- Install Jupyter on your computer and connect to an HDInsight Spark cluster

- Visualize Hive data with Microsoft Power BI in Azure HDInsight

- Visualize Interactive Query Hive data with Power BI in Azure HDInsight

- Set Up PySpark Interactive Environment for Visual Studio Code

- Use Zeppelin to run Hive queries in Azure HDInsight

- Spark with BI: Perform interactive data analysis using Spark in HDInsight with BI tools

- Spark with Machine Learning: Use Spark in HDInsight for analyzing building temperature using HVAC data

- Spark with Machine Learning: Use Spark in HDInsight to predict food inspection results

- Website log analysis using Spark in HDInsight