This Scrapy project scrapes store information, product catalogs, and product details from Despar online stores.

- Scraping Strategy

- Project Structure

- Data Outputs

- Core Spiders

- Key Endpoints

- Installation

- How to Run

- Output Samples

- Recommended Improvements

- Initial Website Access
  - Navigate to the DESPAR homepage and identify all service types (Home Delivery and In-Store Pickup)
  - Detect embedded JSON data containing store information in page scripts

- JSON Data Extraction
  - Extract five critical JSON objects:
    - `atHomeCitiesJson` (cities offering home delivery)
    - `atHomeZipCodeItems` (zip codes for delivery areas)
    - `atStoreCitiesJson` (cities with physical stores)
    - `storesJson` (detailed store information)
    - `stores4MapsJson` (geographical data)

- Data Normalization
  - Process physical stores with complete address/coordinate data
  - Treat home delivery zones as virtual stores
  - Create unified store records with service type classification
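The JSON extraction step above can be sketched as follows. This is a minimal sketch, not the project's actual code: it assumes each object is embedded in a page script as a JavaScript assignment such as `var storesJson = [...];` (the exact markup is not shown in this README), and `extract_json_vars` is a hypothetical helper name.

```python
import json
import re

# Variable names listed in the JSON Data Extraction step.
JSON_VARS = [
    "atHomeCitiesJson",
    "atHomeZipCodeItems",
    "atStoreCitiesJson",
    "storesJson",
    "stores4MapsJson",
]


def extract_json_vars(html, names=JSON_VARS):
    """Return {variable_name: parsed_value} for every variable found in html.

    Assumption: each variable appears as `name = <JSON literal>` inside a script.
    """
    decoder = json.JSONDecoder()
    found = {}
    for name in names:
        match = re.search(r"\b" + re.escape(name) + r"\s*=\s*", html)
        if match is None:
            continue
        try:
            # raw_decode parses one JSON value starting at the given index
            # and ignores whatever JavaScript follows it.
            value, _ = decoder.raw_decode(html, match.end())
        except json.JSONDecodeError:
            continue
        found[name] = value
    return found
```

Variables that are missing or not valid JSON are skipped rather than raising, so a partially changed page still yields the objects that could be read.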

- Category Tree Discovery
  - Identify three category levels:
    - Main Category
    - Sub Category
    - Category

- URL Generation
  - Create canonical URLs for all categories
  - Store complete category paths
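The category steps above could be sketched like this. It is an illustration only: `build_category` is a hypothetical helper, the `url` key is added for the example, and the rule that the numeric ID is the suffix after the last underscore of the slug is an assumption inferred from the sample record (`agrumi_310` with `category_id` `310`).

```python
def build_category(store_url, main_category, sub_category, category, category_slug):
    """Build one category record with its full path and canonical URL."""
    # Assumption: the ID is the part of the slug after the last underscore.
    category_id = category_slug.rsplit("_", 1)[-1]
    return {
        "main_category": main_category,
        "sub_category": sub_category,
        "category": category,
        "category_slug": category_slug,
        "category_id": category_id,
        # Complete path through the three category levels.
        "category_hierarchy": " > ".join([main_category, sub_category, category]),
        # Category page pattern from this README: {store_url}/{category_slug}
        "url": f"{store_url.rstrip('/')}/{category_slug}",
    }
```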

- Lightweight Product Listing
  - Extract basic product information from category pages:
    - Product IDs and names
    - Current and original prices
    - Promotion indicators
    - Thumbnail images
  - Handle pagination systematically
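One way to handle pagination is to request pages in order until a page comes back empty. The sketch below assumes that stopping rule and takes the actual request as a caller-supplied function, because the parameters of the pagination endpoint are not documented here; `iter_products`, `fetch_page`, and `max_pages` are hypothetical names.

```python
from typing import Callable, Iterator, List


def iter_products(
    fetch_page: Callable[[int], List[dict]], max_pages: int = 1000
) -> Iterator[dict]:
    """Yield products page by page until a page returns no products.

    fetch_page(page_number) is supplied by the caller and returns the list of
    product dicts parsed from that page. max_pages is a safety cap so that a
    misbehaving endpoint cannot cause an endless loop.
    """
    for page in range(1, max_pages + 1):
        products = fetch_page(page)
        if not products:
            break
        yield from products
```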

- Comprehensive Product Details
  - For each identified product:
    - Extract high-resolution images
    - Collect full product descriptions

Project structure:

    despar_scraper/
    ├── spiders/
    │   ├── 1_stores.py           # Scrapes store locations
    │   ├── 2_product_list.py     # Scrapes categories and product listings
    │   └── 3_product_details.py  # Scrapes product details
    ├── items.py                  # Data structure definitions
    ├── pipelines.py              # Data processing pipeline
    └── settings.py               # Scrapy configuration

Data outputs:

    data/
    ├── json/
    │   ├── stores.json           # Store locations data
    │   ├── categories.json       # Product categories
    │   ├── product_list.json     # Basic product info
    │   ├── promos.json           # Promotion details
    │   └── product_details.json  # Product details
    ├── csv/
    │   └── stores.csv            # Store locations (CSV format)
    └── log/                      # Spider execution logs
        ├── stores.log            # Execution log
        ├── product_list.log      # Execution log
        └── product_details.log   # Execution log

-

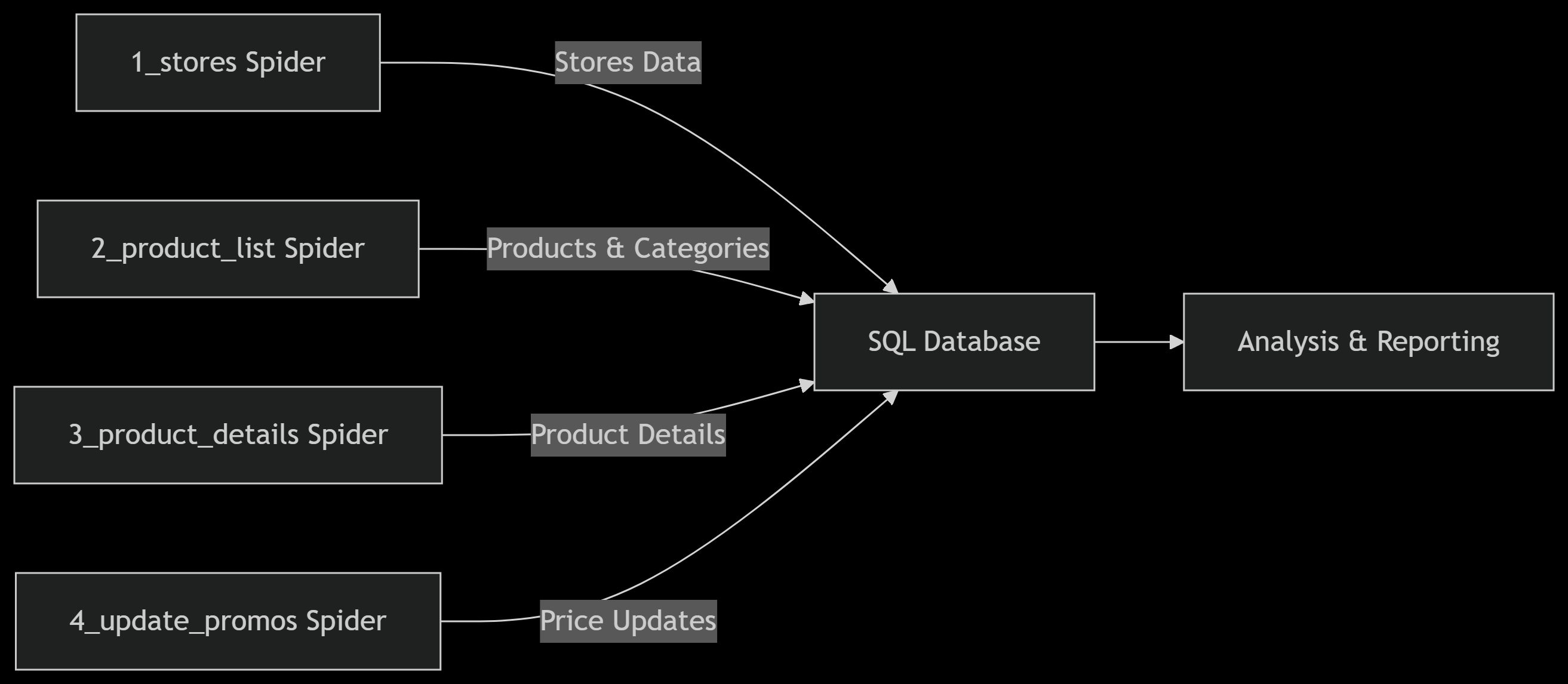

Store Information Spider (

1_stores.py)- Collects physical store locations and delivery zones

- Extracts geo-coordinates, addresses, and service types

- Product Catalog Spider (`2_product_list.py`)
  - Scrapes category hierarchies and product listings
  - Captures pricing, brands, and promotional data

- Product Details Spider (`3_product_details.py`)
  - Gathers product descriptions and image URLs
  - Extracts technical specifications and attributes

- Store locator: `https://{domain}/spesa-ritiro-negozio/{store_slug}`
- Home delivery: `https://{domain}/spesa-consegna-domicilio/{zip_code}`
- Category page: `{store_url}/{category_slug}`
- Product page: `{store_url}/prodotto/{product_id}`
- Product API: `{store_url}/ajax/productsPagination`
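The endpoint patterns above can be turned into small URL builders. This is a sketch with hypothetical function names; it assumes the domain is passed with its scheme (for example `https://shop.despar.com`), which is how the sample store record in this README stores it.

```python
def build_store_url(domain, type_, slug):
    """Store locator or home delivery URL.

    type_ is "spesa-ritiro-negozio" (in-store pickup) or
    "spesa-consegna-domicilio" (home delivery); slug is the store slug
    or the zip code respectively.
    """
    return f"{domain.rstrip('/')}/{type_}/{slug}"


def build_product_url(store_url, product_id):
    """Product page: {store_url}/prodotto/{product_id}"""
    return f"{store_url.rstrip('/')}/prodotto/{product_id}"


def build_pagination_url(store_url):
    """Product API: {store_url}/ajax/productsPagination"""
    return f"{store_url.rstrip('/')}/ajax/productsPagination"
```

Stripping a trailing slash before joining avoids double slashes when a store URL was saved with one.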

Install Scrapy:

    pip install scrapy

Scrapes physical stores and delivery zones:

    # For main domain:
    scrapy crawl 1_stores -a store="https://shop.despar.com"

    # For Sicilia domain:
    scrapy crawl 1_stores -a store="https://shop.desparsicilia.it"

Scrapes categories, products, and promotions:

    # Scrape all stores with first 5 categories each:
    scrapy crawl 2_product_list -a category_limit=5

    # Scrape first 2 stores only:
    scrapy crawl 2_product_list -a store_limit=2

Scrapes images and descriptions:

    # Scrape details for first 100 products:
    scrapy crawl 3_product_details -a product_limit=100

| Spider | Arguments | Description |
|---|---|---|
| `1_stores` | `store` | Target store domain (required) |
| `2_product_list` | `store_limit` | Max stores to process (0=all) |
| | `category_limit` | Max categories per store (0=all) |
| | `store_list_file` | Stores JSON file (`data/json/stores.json`) |
| `3_product_details` | `product_limit` | Max products to scrape (0=all) |
| | `product_list_file` | Product list JSON file (`data/json/product_list.json`) |
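Scrapy passes every `-a` argument to the spider as a string, so the limit arguments need converting before use. The helper below is a sketch of the "0=all" convention from the table; `apply_limit` is a hypothetical name and not necessarily how the spiders implement it.

```python
from itertools import islice


def apply_limit(items, limit="0"):
    """Return at most `limit` items; 0 means no limit.

    `limit` may be a string because Scrapy delivers `-a name=value`
    arguments as strings.
    """
    count = int(limit)
    if count < 0:
        raise ValueError("limit must be 0 (all) or a positive number")
    if count == 0:
        return list(items)
    return list(islice(items, count))
```

For example, `apply_limit(stores, "2")` keeps the first two stores, matching `-a store_limit=2`.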

`stores.json`:

    {
        "pk": "https://shop.despar.com;spesa-ritiro-negozio;corato-interspar-via-gravina-62;33774",
        "domain": "https://shop.despar.com",
        "type_": "spesa-ritiro-negozio",
        "slug": "corato-interspar-via-gravina-62",
        "id_": "33774",
        "name": "Corato, Interspar via Gravina 62",
        "address": "Corato, Interspar via Gravina 62 - Corato",
        "lat": 41.139118,
        "long": 16.415059,
        "city_id": 2322,
        "city_name": "Corato",
        "province_id": "9",
        "province_name": "Bari",
        "url": "https://shop.despar.com/spesa-ritiro-negozio/corato-interspar-via-gravina-62"
    }

`categories.json`:

    {
        "main_category": "Frutta e Verdura",
        "sub_category": "Frutta",
        "sub_category_slug": "frutta_277",
        "sub_category_id": "277",
        "category": "Agrumi",
        "category_slug": "agrumi_310",
        "category_id": "310",
        "category_hierarchy": "Frutta e Verdura > Frutta > Agrumi",
        "store_pk": "https://shop.despar.com;spesa-ritiro-negozio;corato-interspar-via-gravina-62;33774"
    }

`product_list.json`:

    {
        "id_": "233881",
        "name": "YOGURT DA BERE S/LAT. MILA 200G FRAGOLA",
        "brand": "",
        "price": "0,85",
        "old_price": "",
        "meta": "0,20 kg - 4,25 € al kg",
        "icons": [
            "Senza Glutine",
            "Senza Lattosio"
        ],
        "img": "https://restorecms.blob.core.windows.net/mai/products/images/assets/300x300x75/233881.jpg",
        "category_id": "267",
        "category_hierarchy": "Latte e Latticini > Yogurt e dessert > Yogurt e probiotici",
        "store_pk": "https://shop.despar.com;spesa-ritiro-negozio;corato-interspar-via-gravina-62;33774",
        "url": "https://shop.despar.com/spesa-ritiro-negozio/corato-interspar-via-gravina-62/prodotto/233881"
    }

`promos.json`:

    {
        "product_id": "233848",
        "type_": "discount",
        "value": "-7%",
        "end_date": "fino al 08/06",
        "store_pk": "https://shop.despar.com;spesa-ritiro-negozio;corato-interspar-via-gravina-62;33774"
    }
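The sample records keep prices as Italian-formatted strings (such as `"0,85"`) and pack four fields into the `pk` / `store_pk` key, separated by semicolons. The sketch below shows one way to read both back; the helper names are hypothetical, and treating `.` as a thousands separator is an assumption that the samples do not confirm.

```python
from decimal import Decimal


def parse_price(text):
    """Convert a price string such as "0,85" to Decimal; empty strings give None."""
    text = text.strip()
    if not text:
        return None
    # Assumption: "." is a thousands separator and "," the decimal mark.
    return Decimal(text.replace(".", "").replace(",", "."))


def split_pk(pk):
    """Split a store key into its four parts: domain;type_;slug;id_."""
    domain, type_, slug, id_ = pk.split(";")
    return {"domain": domain, "type_": type_, "slug": slug, "id_": id_}
```

`Decimal` is used instead of `float` so that prices compare and sum exactly.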

- Run spiders sequentially (stores → product list → details)
- Add validation and processing to the data in the pipeline to match the other projects
- Add an SQL pipeline to save the data to a database (using SQLAlchemy or raw SQL)
- Add a `4_price_update` spider for regular use that takes `categories.json` as input and collects prices and promotions only
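The first recommendation, running the spiders in order, could be scripted roughly as below. This is a sketch under assumptions: the function names are hypothetical, the limits are passed explicitly as `0` (all) per the arguments table, and the script is expected to run from the Scrapy project directory so that `scrapy crawl` can find the spiders.

```python
import subprocess


def build_commands(store):
    """Return the three crawl commands in dependency order."""
    return [
        # 1. Stores first: later spiders read data/json/stores.json.
        ["scrapy", "crawl", "1_stores", "-a", f"store={store}"],
        # 2. Categories and product listings for every store.
        ["scrapy", "crawl", "2_product_list",
         "-a", "store_limit=0", "-a", "category_limit=0"],
        # 3. Details for every product found in step 2.
        ["scrapy", "crawl", "3_product_details", "-a", "product_limit=0"],
    ]


def run_all(store):
    """Run each spider in turn and stop at the first failure."""
    for command in build_commands(store):
        # check=True raises CalledProcessError on a non-zero exit code,
        # so a failed crawl does not feed incomplete data to the next one.
        subprocess.run(command, check=True)
```

Calling `run_all("https://shop.despar.com")` would then run all three stages for the main domain.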