How can we better develop educational materials to meet kids where they are?

- Is it worth it to spend money to advertise to youth for political campaigns - are they engaging with current events?

- What politics & policies are The Youth™ talking about & why?

- to analyze how age/youth impacts political indoctrination and participation

- to track social impacts of political events

- to understand colloquial knowledge of political concepts

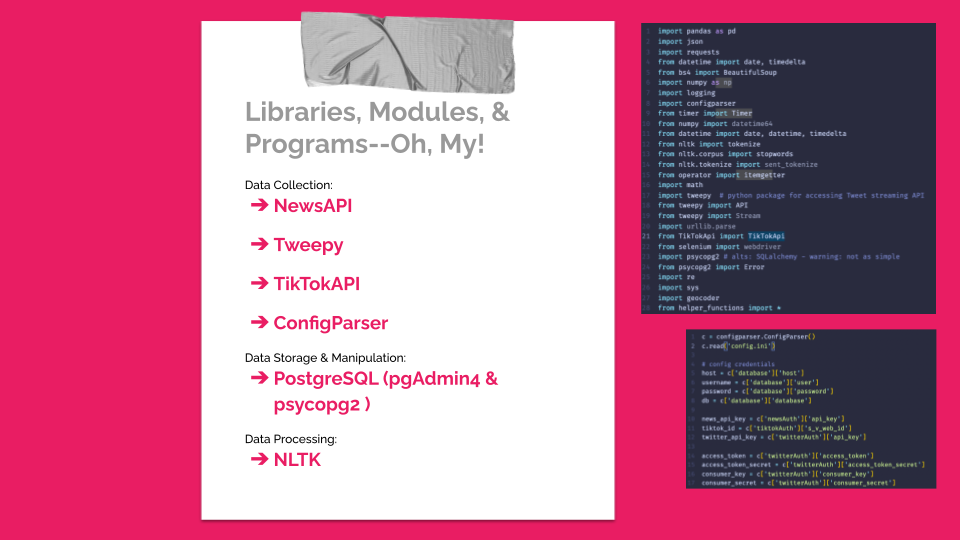

- Use NewsAPI to find top news by day

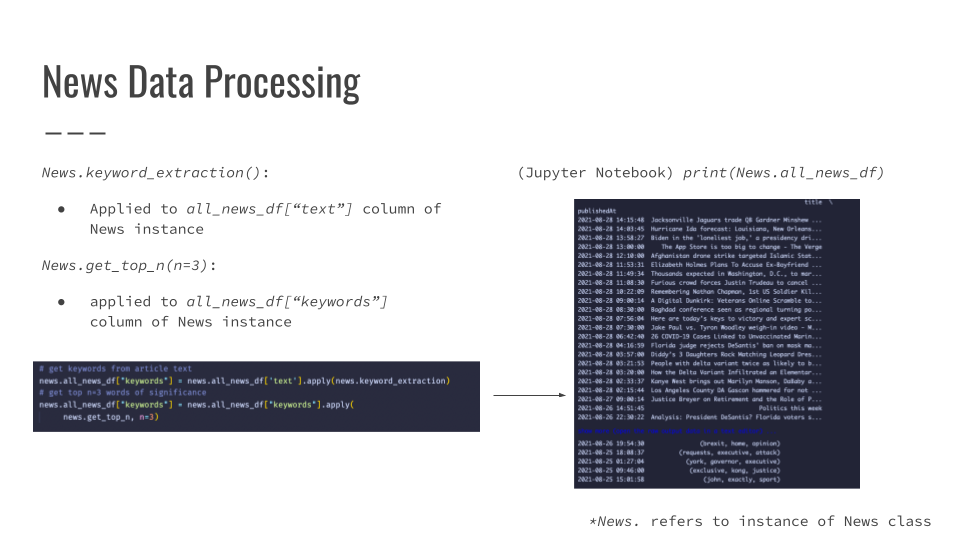

- Parse news story title & article into individual words/phrases

- Count most important individual words & phrases

- Use top 3 most important words & phrases to search TikTok & Twitter

- Count number of tweets & TikToks mentioning key words & phrases

config.ini should have the following layout and info:

[database]

host = <hostname>

database = <db name>

user = <db username>

password = <db password>

[newsAuth]

api_key = <NewsAPI.org API key>

[tiktokAuth]

s_v_web_id = <s_v_web_id>

tt_web_id = <tt_web_id>

[twitterAuth]

access_token = <access_token>

access_token_secret = <access_token_secret>

consumer_key = <consumer_key>

consumer_secret = <consumer_secret>

Note: headers and keys/variables in config.ini file don't need to be stored as strings, but when calling them in program, enclose references with quotes

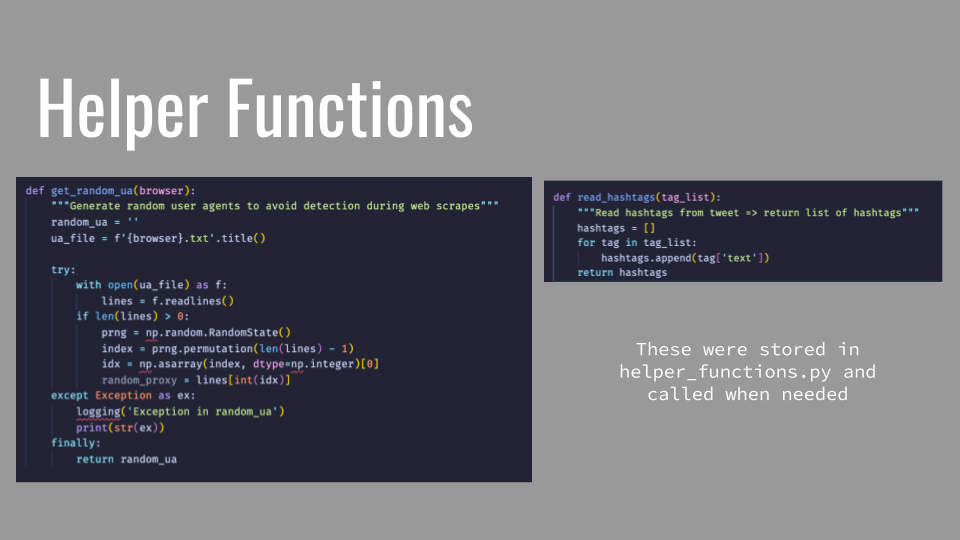

Clone the following url into your project directory using Git or checkout with SVN: https://github.com/tamimibrahim17/List-of-user-agents.git. These .txt files contain User Agents and are specified by browser (shout out Timam Ibrahim!). They will be randomized to avoid detection by web browsers.

- Go to the TikTok website & login

- If using Google Chrome, open the developer console

- Go to the 'Application' tab

- Find & click 'Cookies' in the left-hand panel →

- On the resulting screen, look for

s_v_web_idandtt_web_idunder the 'name' column

Find more information about .ini configuration files in Python documentation: https://docs.python.org/3/library/configparser.html

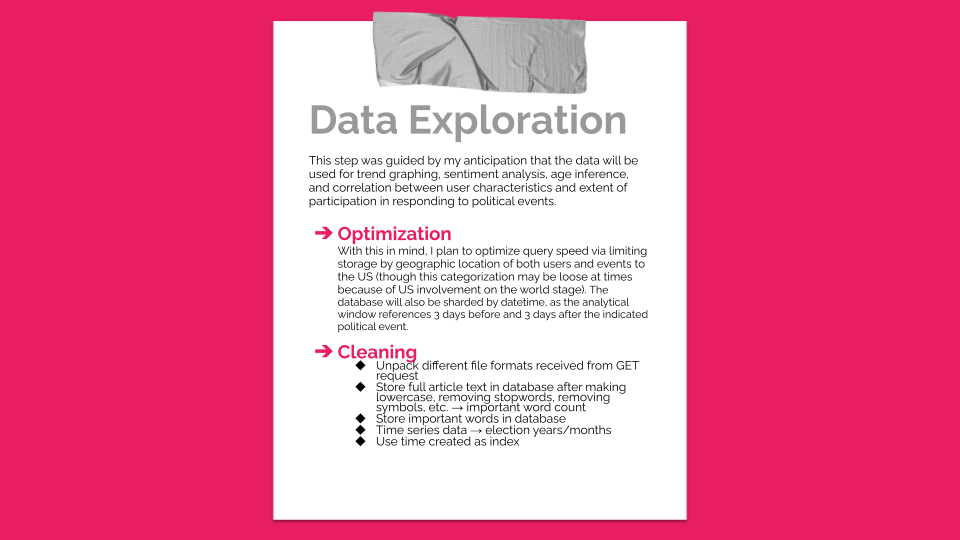

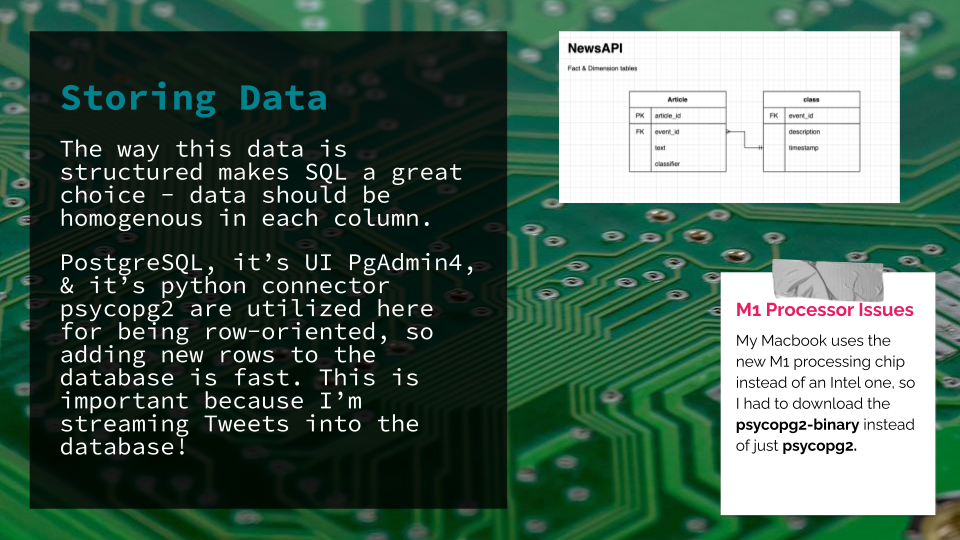

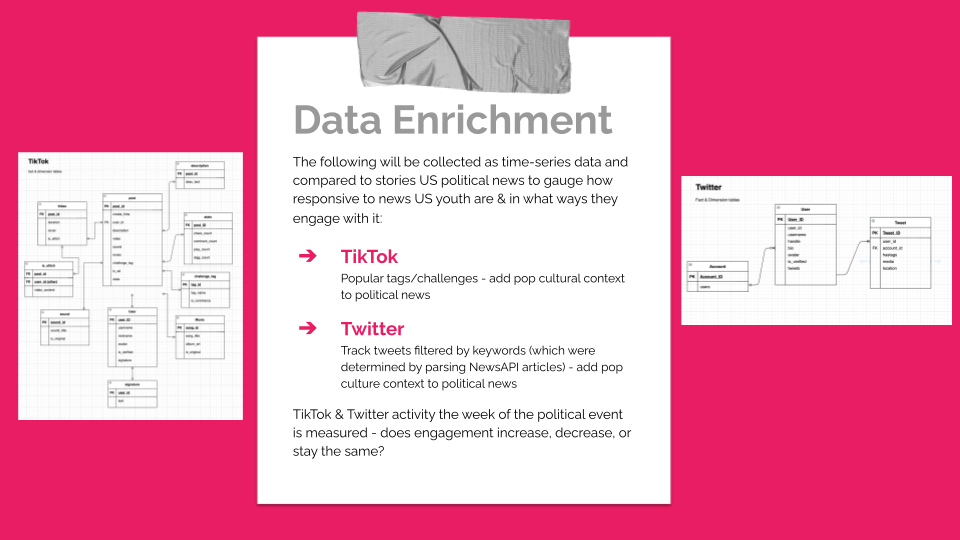

This step was guided by my anticipation that the data will be used for trend graphing, sentiment analysis, age inference, and correlation between user characteristics and extent of participation in responding to political events. With this in mind, I plan to optimize query speed via limiting storage by geographic location of both users and events to the US (though this categorization may be loose at times because of US involvement on the world stage). My PostgreSQL database will also be sharded by datetime, as the analytical window references 3 days before and 3 days after the political event of interest.

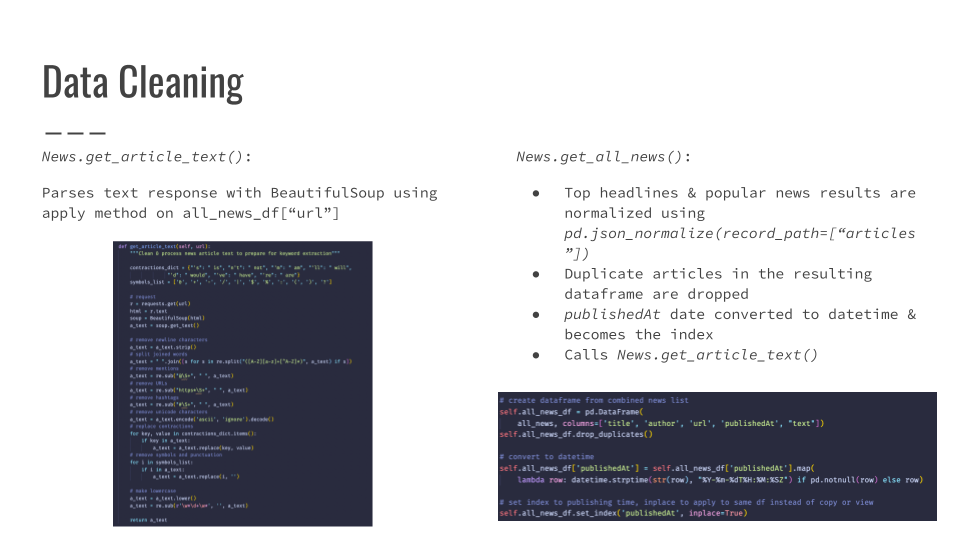

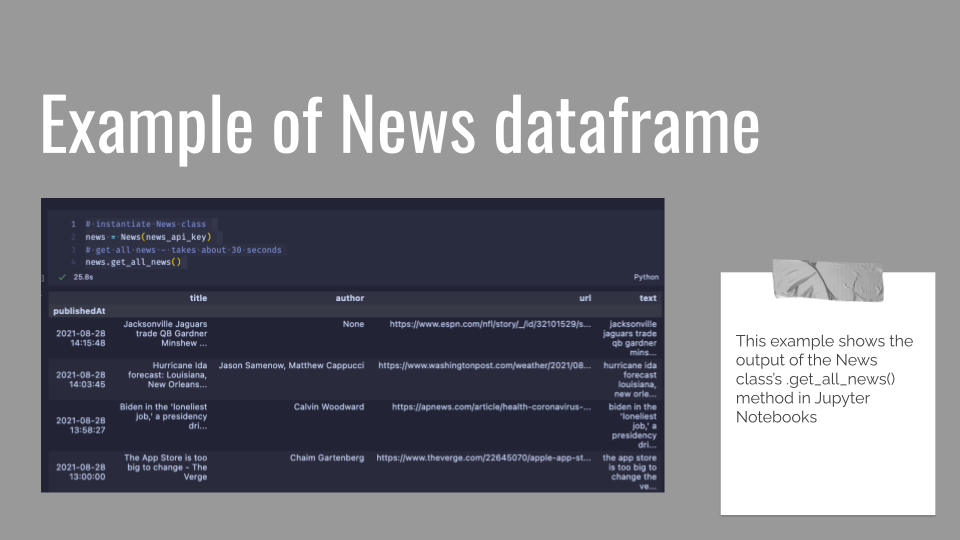

At this point, I've found that my GET requests for popular news articles return various formats which must be converted, unpacked, or otherwise transformed. Because of this added step, I opted to keep the article text in a separate table within the database. The article text in the news table will serve as data sources from which to extract our top 3 keywords (per article) using Term Frequency-Inverse Document Frequency (TF-IDF) calculations. These keywords will be applied to our searches for related TikToks and tweets. For some resources on this, check out

TF-IDF in the real world and a step-by-step guide by Prachi Prakash.

With data from social media adding a pop-cultural context to political news, we inch closer to an understanding of TikTok and Twitter as novel forms of youth political engagement!

This project uses Tweepy's tweet search method to search for tweets within the past seven (7) days using the keywords produced from the .get_all_news() method. A separate Tweepy Stream Listener subclass catches tweets (statuses) that contain our keywords of interest as they are tweeted. The max stream rate for Twitter's API (upon which Tweepy is based) is 450.

application.conf file should look like:

com.ram.batch {

spark {

app-name = <app name>

master = "local"

log-level = "INFO"

}

postgres {

url = "postgresql://localhost/<db name>"

username = <db user>

password = <db pwd>

}

}

Note: these are strings and must be enclosed in quotation marks.

- Make sure you customize your connection string url to the database you use

- The tweetStream Python class invoked in my pipeline includes a Kafka broker:

self.producer = KakfaProducer(bootstrap_servers='localhost') - The broker & the Kafka streaming application are two separate files & entities

Scala applications require 'application'.sbt files that include the name of the app, the version, the scalaVersion, and library dependencies:

```

name := "Tweet Stream"

version := "1.0"

scalaVersion := "3.0.2"

libraryDependencies += "org.apache.spark" %% "spark-core" % "3.1.1"

```

- Find your Spark version by using

spark-submit --versionon the command line.

M1 Processor Issues

- I had to find a compatible SDK to install sbt:

curl -s "https://get.sdkman.io" | zsh source "$HOME/.sdkman/bin/sdkman-init.sh" sdk version sdk install java sdk install sbt sbt compile - Then finally run

sbt compileon the command line from my project directory.

- Make sure any json file that is being used to store tweets is opened with the 'a' designator for 'append' or else each tweet will overwrite the last

- If sbt won't compile, ensure that your .sbt file dependencies are the correct versions

- Make sure your application directory mirrors what is found in the Spark documentation so it can compile properly.

This project uses Avilash Kumar's TikTokAPI. Refer to their GitHub for further information.

- TBA