GLTFLoader - De-duplicate BufferGeometry #12718

|

This looks great to me, thanks. I'm happy with having this on by default, and frankly not even sure the option of turning it off is necessary. Is there a complete sample model you could share? Just as an attachment in a PR comment is fine.

|

Probably, if you check `if ( keysA.length !== keysB.length ) return false;` you could remove `for ( i = 0; i < keysB.length; i++ ) { ... }`?

I think both would be needed, in case one object has extra attributes that don't appear in the other. In that case they should be treated as two separate BufferGeometries.

> in case one object has extra attributes that don't appear in the other.

I think `keysA.length` wouldn't equal `keysB.length` in that case.

Ah yes, I misread your comment. 👍
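As a rough sketch of the point settled in this thread (not the PR's actual code; the helper name `isAttributesEqual` and the sample attribute maps are hypothetical): once the key counts are known to match, walking the keys of one side is enough, because any attribute missing from the other side shows up as `undefined` and fails the value comparison. This assumes attribute values are accessor indices and therefore never `undefined`.

```javascript
// Hypothetical helper, for illustration only.
// `a` and `b` are glTF primitive attribute maps, e.g. { POSITION: 0, NORMAL: 1 },
// where each value is an accessor index.
function isAttributesEqual( a, b ) {

	var keysA = Object.keys( a );
	var keysB = Object.keys( b );

	// Different key counts: one map has an attribute the other lacks.
	if ( keysA.length !== keysB.length ) return false;

	// Same count, so a single pass over the keys of `a` is sufficient.
	// A key missing from `b` yields undefined, which never equals an accessor index.
	for ( var i = 0; i < keysA.length; i ++ ) {

		var key = keysA[ i ];

		if ( a[ key ] !== b[ key ] ) return false;

	}

	return true;

}

console.log( isAttributesEqual( { POSITION: 0, NORMAL: 1 }, { NORMAL: 1, POSITION: 0 } ) ); // true
console.log( isAttributesEqual( { POSITION: 0 }, { POSITION: 0, NORMAL: 1 } ) ); // false
```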

|

I vote for default (and always on).

|

Can we use a hash, rather than an array, for faster search? For example, the key could be like this (it could be optimized further) Also,

|

The hash would also have to take into account the sorting of the keys. I'm not sure the code is really much simpler and I can't imagine the performance differences would be significant. But I can explore that if you want.

Here's a test case, a bunch of cubes that get de-duplicated down to a single one.

Link to ZIP of blend and GLTF files

Note: When turning particles into meshes, Blender is smart enough to use the same Object Data for all of them, and de-duplication isn't necessary after exporting GLTF. I had to force them all as unique geometries in this test. However, I've run into other situations where deduplication is important: certain workflows and editing approaches (e.g. using Duplicate Object, or exporting from Maya) can lead to a lot of redundant geometry.

|

I still think a hash is better than an array, because having each geometry search the array doesn't scale with the number of distinct geometries: it's O(N^2). (I assume this option is always on. And yeah, sorting would be a bit of a performance problem though...) But such an optimization can be done later. Array first would be OK for now.
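To make the hash idea concrete, here is a minimal sketch under stated assumptions. It is not the key format proposed in this thread (that snippet is not shown here); `createPrimitiveKey`, `getCachedGeometry`, and the key layout are hypothetical, and only `attributes` and `indices` are considered. Sorting the attribute names makes the key independent of key order, at the cost of a sort per primitive.

```javascript
// Hypothetical key builder: attribute names are sorted so that
// { POSITION: 0, NORMAL: 1 } and { NORMAL: 1, POSITION: 0 } produce the same key.
function createPrimitiveKey( primitive ) {

	var names = Object.keys( primitive.attributes ).sort();
	var parts = [];

	for ( var i = 0; i < names.length; i ++ ) {

		parts.push( names[ i ] + ':' + primitive.attributes[ names[ i ] ] );

	}

	return 'indices:' + primitive.indices + ';' + parts.join( ',' );

}

// Map-based cache: one lookup per primitive instead of a scan over all cached entries.
var cache = new Map();

// `build` is a caller-supplied function that creates the geometry on a cache miss.
function getCachedGeometry( primitive, build ) {

	var key = createPrimitiveKey( primitive );

	if ( ! cache.has( key ) ) cache.set( key, build( primitive ) );

	return cache.get( key );

}
```

With a lookup table the per-primitive cost no longer grows with the number of cached geometries, which is the scalability concern raised above; whether that outweighs the sorting cost for typical models is left open in this discussion.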

Agreed. No need to have an

|

Ok, thanks for the feedback everybody. I've enabled this by default (no need for

|

Oops! Sorry, it seems another PR caused some conflicts. Do you mind resolving them?

|

Ok, I've merged with latest dev and I'm using `if`/`else` now instead of early breaking/continuing the loop.

|

Thanks @mattdesl! @donmccurdy looks good then?

|

This looks good to me. 🙂 For my own curiosity (and this doesn't need to block merging) is there a difference between reusing an entire

|

Hmm... yes. Currently, I think the renderer avoids reuploading buffer attributes if the geometry and material are the same as the previous object rendered.

|

Thanks!

|

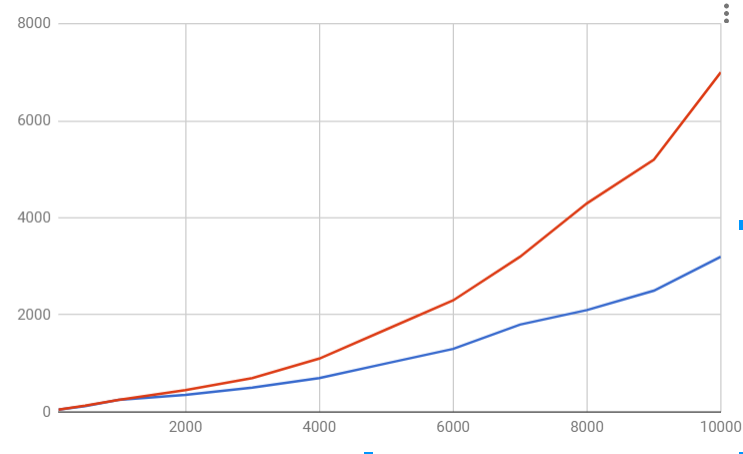

Let me share an evaluation of how performance depends on the number of distinct primitives (distinct primitives are the ones that can't be reused because their attributes differ). The conclusion is that it doesn't matter in general.

*1, *2: Elapsed time of

*1: To simulate 'w/o this change', I added

three.js/examples/js/loaders/GLTFLoader.js, lines 1213 to 1215 in 8732d13

*2: To simulate 'w/ this change' with distinct primitives, I added

three.js/examples/js/loaders/GLTFLoader.js, lines 1191 to 1193 in 8732d13

|

The complexity of the part I mentioned above is O(n^2 * m), where n is the number of distinct primitives and m is the number of keys in an

|

Nice writeup, thanks @takahirox!

The other condition is that the reused primitive be simple; for complex primitives this might skew the other way. And the loading time is a secondary concern to runtime performance here, where this PR does improve things nicely.

Open to trying other versions of

|

Yup, I will, but I feel like trying to find a better solution, because I know the sorting idea isn't the best one.

|

I'm thinking of updating the glTF spec (in the next version) to allow shared primitives among meshes, rather than trying algorithm optimization.

|

Worth discussing, maybe in KhronosGroup/glTF#1249 would be better 👍

|

Oops, I just posted in the wrong thread by mistake. Thanks for pointing me to the right one.

|

I just realized that more precisely we should also check

This feature adds simple BufferGeometry caching to GLTFParser, based on the `primitives` object (looking at `attributes` and `indices` to see if all keys line up).

If GLTF is exported or post-processed to de-duplicate matching geometries, you might end up with a `meshes` definition like this:

Both of these cubes have different materials, but point to the same index & vertex data. With this PR, because all the attributes/indices line up between the two primitives, they will end up using a single BufferGeometry. In large scenes with a lot of duplicated geometry (e.g. a city with tons of repeated buildings and trees of different scales), you can end up with huge loading/rendering benefits because you are dramatically cutting down the total geometries loaded onto the GPU.
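A minimal sketch of that situation, assuming made-up mesh names, accessor indices, and material indices, and using plain objects as stand-ins for `BufferGeometry` instances. The helper names `sharesGeometryData` and `getGeometry` are hypothetical and this is not the loader's code; it only illustrates an array-scanned cache keyed on `attributes` and `indices`.

```javascript
// Illustrative glTF `meshes` fragment: two cubes with different materials
// that reference the same accessors for vertex and index data.
var meshes = [
	{ name: 'CubeA', primitives: [ { attributes: { POSITION: 0, NORMAL: 1 }, indices: 2, material: 0 } ] },
	{ name: 'CubeB', primitives: [ { attributes: { POSITION: 0, NORMAL: 1 }, indices: 2, material: 1 } ] }
];

// True when both primitives reference the same indices and attribute accessors.
// The material is deliberately ignored: it does not affect the geometry.
function sharesGeometryData( a, b ) {

	if ( a.indices !== b.indices ) return false;

	var keysA = Object.keys( a.attributes );
	var keysB = Object.keys( b.attributes );

	if ( keysA.length !== keysB.length ) return false;

	return keysA.every( function ( key ) {

		return a.attributes[ key ] === b.attributes[ key ];

	} );

}

// Array cache scanned linearly; each entry pairs a primitive with its geometry.
var geometryCache = [];

function getGeometry( primitive ) {

	for ( var i = 0; i < geometryCache.length; i ++ ) {

		if ( sharesGeometryData( geometryCache[ i ].primitive, primitive ) ) return geometryCache[ i ].geometry;

	}

	// Stand-in for building a real BufferGeometry from the accessors.
	var geometry = { id: geometryCache.length };

	geometryCache.push( { primitive: primitive, geometry: geometry } );

	return geometry;

}

var geometries = meshes.map( function ( mesh ) {

	return mesh.primitives.map( getGeometry );

} );

console.log( geometries[ 0 ][ 0 ] === geometries[ 1 ][ 0 ] ); // true
console.log( geometryCache.length ); // 1
```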

This PR is really only useful if you post-process your GLTF script — I have a node script below, but perhaps one day this sort of feature will end up in Blender's exporter, or in more standard tools like gltf-pipeline.

GLTF de-duplication Node.js script

By default, I've enabled this feature, but the user can turn it off by passing an `options` object into the second argument of the `GLTFLoader` constructor. I am not sure whether you would prefer it on or off by default.