Conversation

| obs_ph = tf.placeholder(tf.float32, (None, ) + obs_dim, name="obs") | ||

|

|

||

| self.model.build(obs_ph) | ||

| with tf.variable_scope(self._variable_scope): |

There was a problem hiding this comment.

self._variable_scope is a VariableScope object, we have to do

with tf.variable_scope(VariableScopeObject):to reenter the scope.

(see https://github.com/tensorflow/tensorflow/blob/master/tensorflow/python/ops/variable_scope.py#L1804).

Also VariableScope is pickleable, variable_scope is not.

| out = model.build(out) | ||

|

|

||

| def q_vals(self): | ||

| return self.models[-1].networks['default'].outputs |

There was a problem hiding this comment.

you mean store q_vals in a dict? or storing the models in a dict?

There was a problem hiding this comment.

the models. if the order of insertion changes at any time, your code will break.

you can also just keep the models in instance variables (self.model1 etc.) and also add them to the list self.models...

| strides=(4, 2, 1), | ||

| dueling=False) | ||

|

|

||

| policy = DiscreteQfDerivedPolicy(env_spec=env, qf=qf) |

| algo.train(sess) | ||

|

|

||

|

|

||

| run_experiment( |

There was a problem hiding this comment.

Please update this with LocalRunner

| num_timesteps=num_timesteps, | ||

| qf_lr=1e-4, | ||

| discount=1.0, | ||

| min_buffer_size=1e3, |

There was a problem hiding this comment.

Have you added an int() call to min_buffer_size?

|

|

||

| replay_buffer = SimpleReplayBuffer( | ||

| env_spec=env.spec, | ||

| size_in_transitions=int(10000), |

| qf = DiscreteMLPQFunction( | ||

| env_spec=env.spec, hidden_sizes=(64, 64), dueling=False) | ||

|

|

||

| policy = DiscreteQfDerivedPolicy(env_spec=env, qf=qf) |

| qf_lr=0.001, | ||

| qf_optimizer=tf.train.AdamOptimizer, | ||

| discount=1.0, | ||

| name=None, |

| num_filters, | ||

| strides, | ||

| name=None, | ||

| padding="SAME", |

There was a problem hiding this comment.

I think it's better to use single quotations for new files.

| filter_dims, | ||

| num_filters, | ||

| strides, | ||

| name=None, |

There was a problem hiding this comment.

Model has a default name if name=None, which will be the class name. How should we do this? If we enforce all derived model class to have name, we don't need the default name at all.

There was a problem hiding this comment.

Seems like you have already violated the Model interface by making name an kwarg:

garage/garage/tf/models/base.py

Line 159 in 123efde

There was a problem hiding this comment.

oh yes, so a name is actually required.

| for model in self.models: | ||

| out = model.build(out) | ||

|

|

||

| def q_vals(self): |

|

|

||

|

|

||

| class TestDQN(TfGraphTestCase): | ||

| def test_dqn_cartpole(self): |

There was a problem hiding this comment.

How long does it take to run this test?

| episode_rewards.append(0.) | ||

|

|

||

| for itr in range(self.num_timesteps): | ||

| with logger.prefix('Iteration #%d | ' % itr): |

There was a problem hiding this comment.

'Timestep' sounds more appropriate to me.

| self._dueling = dueling | ||

|

|

||

| obs_dim = self._env_spec.observation_space.shape | ||

| action_dim = env_spec.action_space.flat_dim |

There was a problem hiding this comment.

self._env_spec.action_space.flat_dim

| @@ -0,0 +1,190 @@ | |||

| """Discrete MLP QFunction.""" | |||

There was a problem hiding this comment.

We should state this CNN network actually supports CNN2MLP. This is not the same as the CNN* primitive in the current garage. If you think the naming is ok, please add more details to this documentation.

| out = model.build(out, name=name) | ||

| return out | ||

|

|

||

| def clone(self, name): |

There was a problem hiding this comment.

Should clone interface be in the base class?

There was a problem hiding this comment.

Yes, it will eventually be an interface in Model too.

There was a problem hiding this comment.

Please update it into the base class.

| size_in_transitions=int(5000), | ||

| time_horizon=max_path_length) | ||

| qf = DiscreteMLPQFunction(env_spec=env.spec, hidden_sizes=(64, 64)) | ||

| policy = DiscreteQfDerivedPolicy(env_spec=env, qf=qf) |

| @@ -0,0 +1,55 @@ | |||

| """ | |||

| This script creates a test that fails when garage.tf.algos.DDPG performance is | |||

ryanjulian

left a comment

ryanjulian

left a comment

There was a problem hiding this comment.

Excellent work. +1000

Please address other reviewers' comments, rebase, and submit!

3890c4d to

5b95308

Compare

Codecov Report

@@ Coverage Diff @@

## master #582 +/- ##

=========================================

+ Coverage 62.25% 63.35% +1.1%

=========================================

Files 163 169 +6

Lines 9532 9786 +254

Branches 1267 1284 +17

=========================================

+ Hits 5934 6200 +266

+ Misses 3288 3269 -19

- Partials 310 317 +7

Continue to review full report at Codecov.

|

|

Fixed all comments above. I will add more tests. |

b2983a1 to

b5d8ff4

Compare

2dac995 to

4dc7abb

Compare

| # Select which episodes to use | ||

| time_horizon = buffer["action"].shape[1] | ||

| rollout_batch_size = buffer["action"].shape[0] | ||

| time_horizon = buffer['action'].shape[1] |

There was a problem hiding this comment.

Is it still valid to have time_horizon since the replay buffer now stores variable-length episodes? It may beyond the scope of this PR. I just think the interface seems a bit confusing now.

There was a problem hiding this comment.

Is it because there is no max_path_length anymore in off policy algos?

CatherineSue

left a comment

CatherineSue

left a comment

There was a problem hiding this comment.

I only have some minor comments.

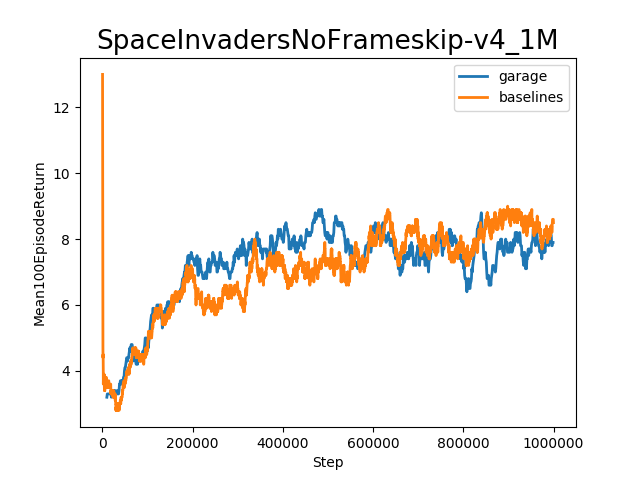

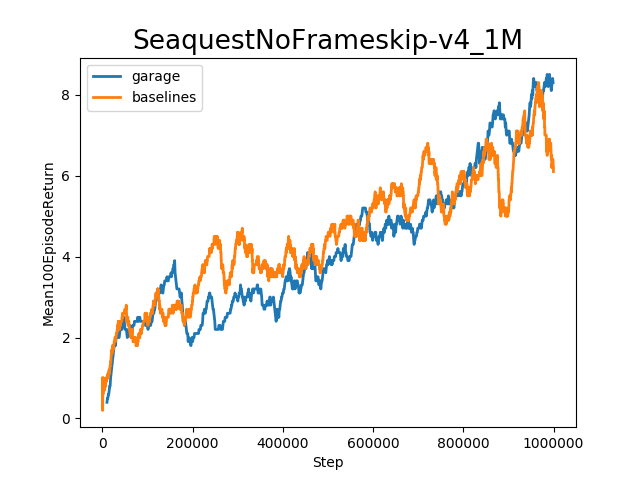

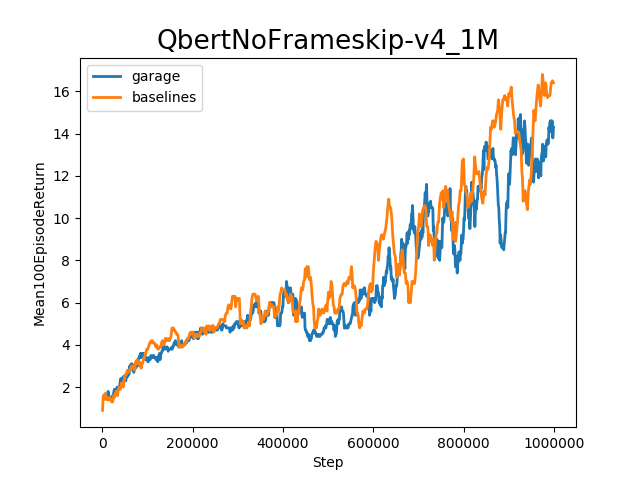

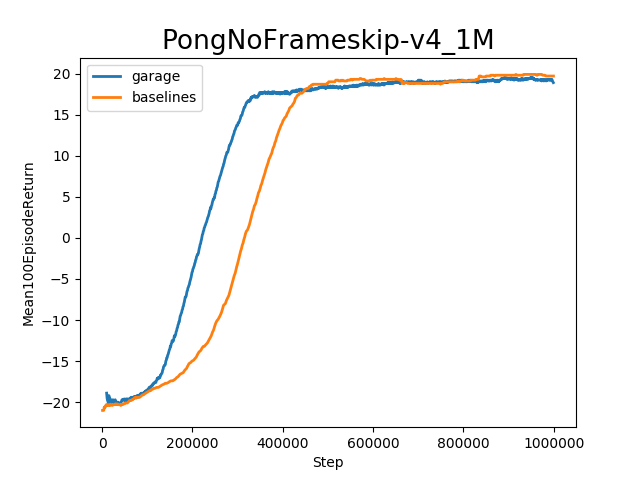

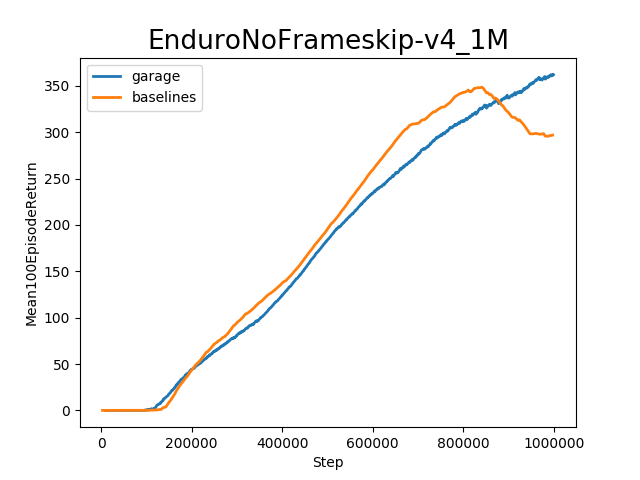

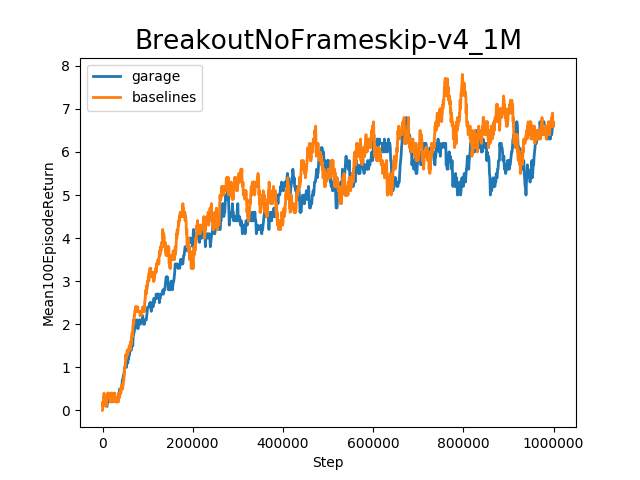

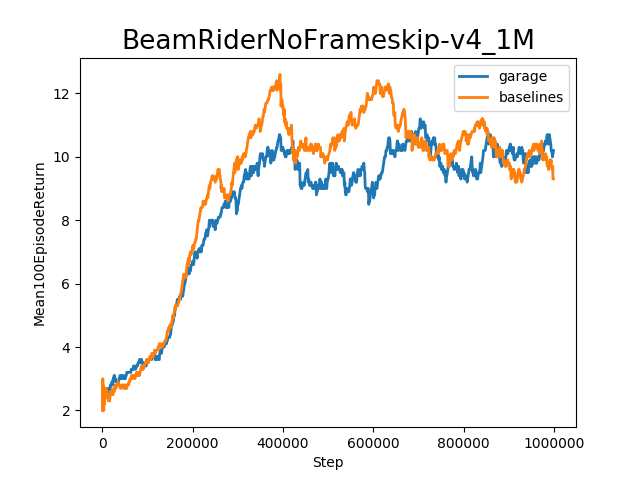

DQN implementation with garage.Model. This is the first algorithm for pixel environments. This PR adds the algorithm as well as the models, primitives and environment wrappers required for training in pixel environments. * Models * MLPDuelingModel * CNNModelWithMaxPooling * Primitives * QFunction2 (base class, without parameterized) * DiscreteCNNQFunction * Wrappers * AtariEnvWrapper (needed when using env wrappers from baselines) The eviction policy of replay buffer used to be random. To make experiments determinisitic, it is changed to First In First Out (FIFO). It was proven to be necessary in order to achieve better result in complex environment for DQN. Added corresponding properties in QFunction to make reference easier, e.g. q_vals. Added clone method in QFunction to enable copying configuration, not including the parameters. Since all the necessary operations will be done in object construction, we can simply create a new object with a different name (because we want the new object to have a different name for variable scope).

self.modelsto store all the models in QFunction. Sometimes there are multiple models and we need something to keep track of them. I assume the models are stored in order, so input must beself.models[0].inputand output must beself.models[-1].output.def q_vals()andinputproperty.Will post benchmark result soon, fixing the scaling.

Will add more tests later.