Progress-Aware Video Frame Captioning

Zihui Xue, Joungbin An, Xitong Yang, Kristen Grauman

CVPR, 2025

project page | arxiv | bibtex

This codebase builds upon the excellent LLaVA-NeXT repository. We deeply appreciate their efforts in open-sourcing!

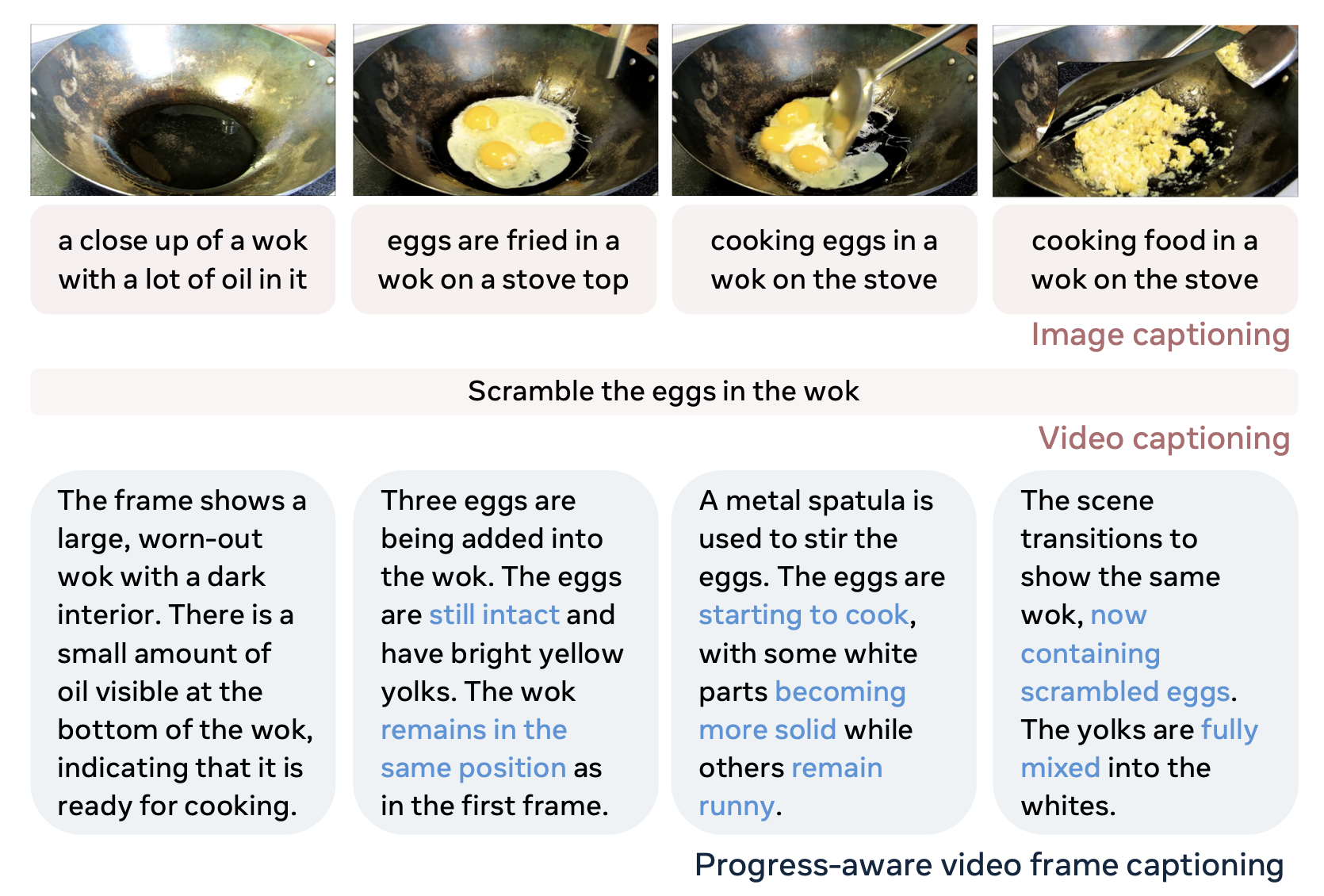

We propose progress-aware video frame captioning (bottom), which aims to generate a sequence of captions that capture the temporal dynamics within a video.

conda create -n progcap python=3.10 -y

conda activate progcap

cd LLaVA-NeXT

pip install -e ".[train]"

pip install flash-attn==2.6.3

- We provide pre-processed frame sequences for the benchmarks used in our paper:

  - HowToChange: `data/data_files/input/htc_valhs_seq.json`
  - COIN: `data/data_files/input/coin_valhs_seq.json`

- These frames are extracted at 1 FPS, filtered, and manually verified for assessing fine-grained action progress. Download the frames here, and place them under the `data/` folder. Ensure the paths match those expected by the JSON files or update the JSONs accordingly.

- To run inference on your own video data:

  - Run `python prepare_data.py --video_file VIDEO_FILE` (adjust arguments as needed) to extract frames from the video and create a JSON file like `data/data_files/input/one_example.json`.

- ProgressCaptioner model checkpoint is available here.
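Before running inference, it can help to confirm that the frame paths referenced by a data file actually exist on disk. The sketch below is not part of the repository: the function names are hypothetical, and because the JSON schema is not documented here, it assumes only that frame paths appear somewhere in the file as strings ending in a common image extension.

```python
import json
from pathlib import Path

# Assumption: frames are stored as ordinary image files with these suffixes.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def collect_image_paths(node):
    """Recursively yield every string in a parsed JSON value that looks like an image path."""
    if isinstance(node, str):
        if Path(node).suffix.lower() in IMAGE_SUFFIXES:
            yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from collect_image_paths(value)
    elif isinstance(node, list):
        for item in node:
            yield from collect_image_paths(item)


def find_missing_frames(json_file, root="."):
    """Return the sorted, de-duplicated image paths in `json_file` that do not exist under `root`."""
    with open(json_file) as f:
        data = json.load(f)
    root = Path(root)
    # Absolute paths are checked as-is; relative paths are resolved against `root`.
    return sorted({p for p in collect_image_paths(data) if not (root / p).exists()})
```

For example, calling `find_missing_frames("data/data_files/input/htc_valhs_seq.json", root=".")` from the repository root would list any referenced frames that are not yet in place. An empty list suggests the paths line up.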

Run the inference script using the prepared data file and the downloaded model checkpoint:

python infer.py --data_file DATA_FILE --model_path ProgressCaptionerCheckpoint

- Input: `DATA_FILE` should be in the `data/data_files/input/` directory, for example `data/data_files/input/one_example.json` or `data/data_files/input/htc_valhs_seq.json`.
- Output: captions are printed and a response file is saved in the `data/data_files/output` directory.

We provide post_process.py to visualize the generated captions alongside the frames in an easy-to-browse HTML format.

- The generated HTML files will be saved in the `data/viz_html/` directory.
- Start a simple web server from the repository's root directory with `python -m http.server 8000` (or any available port), then navigate to http://localhost:8000/data/viz_html/ in your browser.

ProgressCaptioner training data is available here. Videos are sourced from COIN and HowToChange datasets. For HowToChange, you may retrieve videos directly from YouTube using their unique IDs. The start time and duration can be inferred from the filename (look for st and dur).
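As a rough illustration of recovering the clip window from a filename, the sketch below pulls out the numbers that follow `st` and `dur`. The exact naming scheme is not specified here, so the pattern (tokens such as `_st12.5` and `_dur30`, separated by `_`, `-`, or `.`) and the function name are assumptions. Check them against the real filenames before relying on this.

```python
import re

# Assumed token shape: a separator, then "st" or "dur", then a number in seconds.
_NUMBER = r"(\d+(?:\.\d+)?)"
_START = re.compile(r"(?:^|[_\-.])st[_\-]?" + _NUMBER)
_DURATION = re.compile(r"(?:^|[_\-.])dur[_\-]?" + _NUMBER)


def parse_clip_window(filename):
    """Return (start_seconds, duration_seconds) parsed from a clip filename.

    The last match of each token is used, on the assumption that the timing
    tokens come after the video ID, which may itself contain similar text.
    """
    starts = _START.findall(filename)
    durations = _DURATION.findall(filename)
    if not starts or not durations:
        raise ValueError(f"no st/dur tokens found in {filename!r}")
    return float(starts[-1]), float(durations[-1])
```

With a hypothetical filename such as `VIDEOID_st12.5_dur30.mp4`, this returns a start of 12.5 seconds and a duration of 30 seconds.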

Next, to prepare the data, extract video frames at 1 FPS (the extract_frames function in prepare_data.py may be helpful).
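To make the 1 FPS sampling concrete, here is a minimal sketch of choosing which source frames to keep. It is not the logic of `extract_frames` in `prepare_data.py`. The function name and the nearest-frame rounding are assumptions made for illustration.

```python
def frame_indices_at_fps(total_frames, source_fps, target_fps=1.0):
    """Return the source-frame indices nearest to each sampling instant at `target_fps`.

    Sampling instants are 0, 1/target_fps, 2/target_fps, ... seconds, and
    indices are kept only while they fall inside the video.
    """
    if source_fps <= 0 or target_fps <= 0:
        raise ValueError("frame rates must be positive")
    indices = []
    k = 0
    while True:
        timestamp = k / target_fps                 # seconds from the start of the video
        index = int(round(timestamp * source_fps))  # nearest source frame
        if index >= total_frames:
            break
        # Skip repeats, which occur if target_fps exceeds source_fps.
        if not indices or index != indices[-1]:
            indices.append(index)
        k += 1
    return indices
```

For a 90-frame clip recorded at 30 FPS, `frame_indices_at_fps(90, 30.0)` gives `[0, 30, 60]`, one frame per second. The resulting indices can then be passed to whichever video reader is used to decode and save the frames.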

We follow the LLaVA-NeXT training pipeline for SFT and DPO with the LLaVA-OV-7B model. Modify the training scripts (finetune_ov.sh, dpo_ov7b.sh) as needed to launch ProgressCaptioner training.

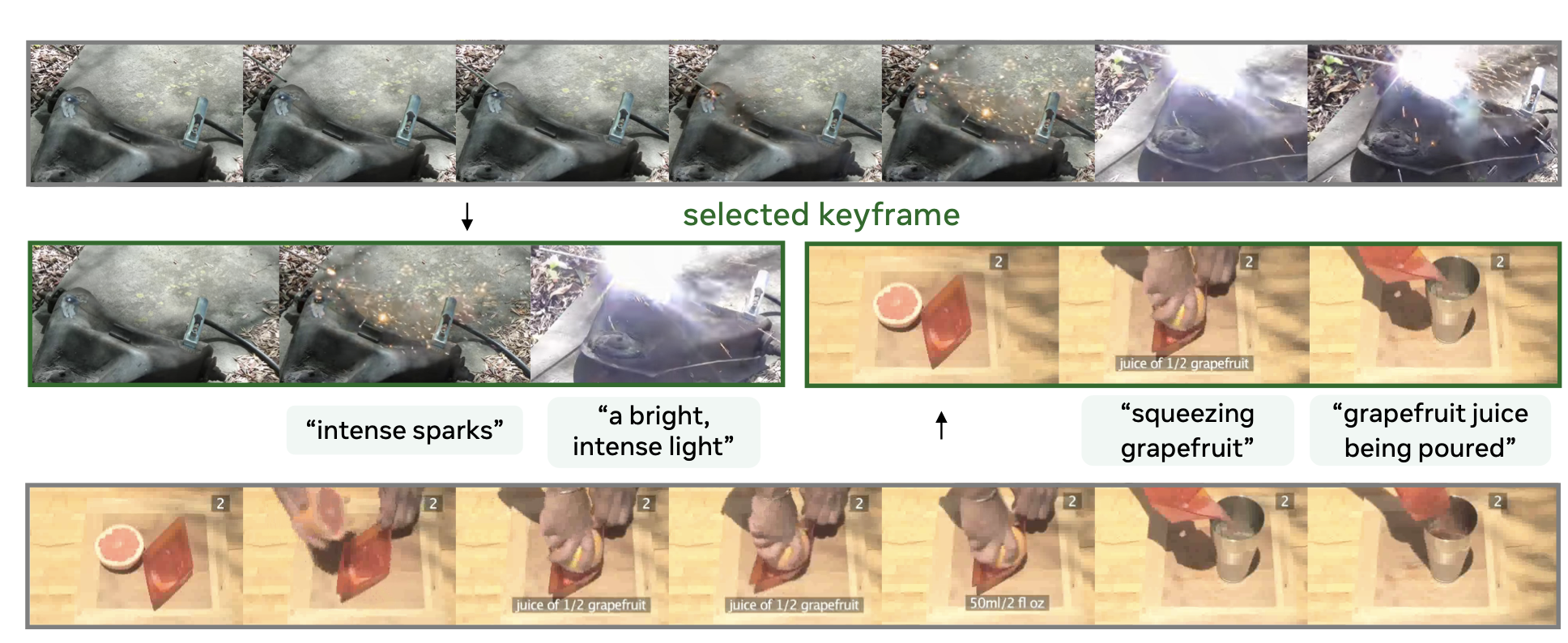

ProgressCaptioner can also be used to identify informative keyframes within a video sequence.

🚧 Code and Instructions Coming Soon

If you find our work inspiring or use our codebase in your research, please consider giving a star ⭐ and a citation.

@article{xue2024progress,
  title={Progress-Aware Video Frame Captioning},
  author={Xue, Zihui and An, Joungbin and Yang, Xitong and Grauman, Kristen},
  journal={arXiv preprint arXiv:2412.02071},
  year={2024}
}